Jensen Huang took the stage at San Jose’s SAP Center on March 16 and delivered one of Nvidia’s most packed keynotes yet. The headline number—$1 trillion in combined Blackwell and Vera Rubin purchase orders through 2027—set the tone immediately. From there, Huang walked through the Vera Rubin platform, now shipping, the first Groq-derived LPU, enterprise-grade agentic AI via NemoClaw, robotics partnerships, and a detailed preview of the 2028 Feynman architecture. Nvidia arrived at GTC 2026 not as a chip company but as the infrastructure backbone of the entire AI industry.

Jensen Huang explains his view of the world.

Jensen Huang opened GTC 2026 with a characteristically bold framing: Nvidia’s combined Blackwell and Vera Rubin purchase order pipeline now targets $1 trillion through 2027—double the $500 billion opportunity the company projected just a year ago. The central theme was agentic AI driving a fundamental shift in computing demand, with Nvidia repositioning itself as a full-stack AI infrastructure company rather than a GPU vendor.

Vera Rubin platform

Huang formally unveiled the Vera Rubin platform as a rack-scale supercomputer purpose-built for agentic AI. Vera Rubin pairs a new Nvidia-designed Vera CPU with the Rubin GPU architecture, delivering what Huang described as a 40-million-fold increase in compute over 10 years. Vera Rubin ships to customers later in 2026. Huang also previewed Kyber, the next rack architecture after Rubin, which integrates 144 GPUs in vertical compute trays to boost density and reduce latency. Kyber arrives in Vera Rubin Ultra, targeted for 2027.
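To put the 40-million-fold figure in perspective, here is a rough back-of-the-envelope sketch of the yearly growth rate it implies. It assumes the gain compounds at a constant rate across the 10 years, which is our simplification and not something Huang stated; the variable names are ours.

```python
import math

# Figures quoted from the keynote.
TOTAL_GAIN = 40_000_000   # claimed overall increase in compute
YEARS = 10                # period the claim covers

# Assumption (ours): growth compounds at a constant rate every year.
annual_factor = TOTAL_GAIN ** (1 / YEARS)

# Time for compute to double at that constant rate, in months.
doubling_months = 12 * math.log(2) / math.log(annual_factor)

print(f"Implied yearly multiplier: {annual_factor:.2f}x")
print(f"Implied doubling time: {doubling_months:.1f} months")
```

Under that assumption, the claim works out to a bit under 6x more compute each year, or a doubling roughly every five months.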

Groq 3 LPU

Huang unveiled the Nvidia Groq 3 Language Processing Unit—the first chip to emerge from Nvidia’s $20 billion Groq asset acquisition completed in December 2025. The Groq 3 LPU targets inference acceleration, with one core optimized specifically to accelerate GPU throughput. It ships in Q3 2026. The companion Groq 3 LPX rack houses 256 LPUs and sits alongside Vera Rubin rack-scale systems in data center deployments.

Feynman—2028 architecture

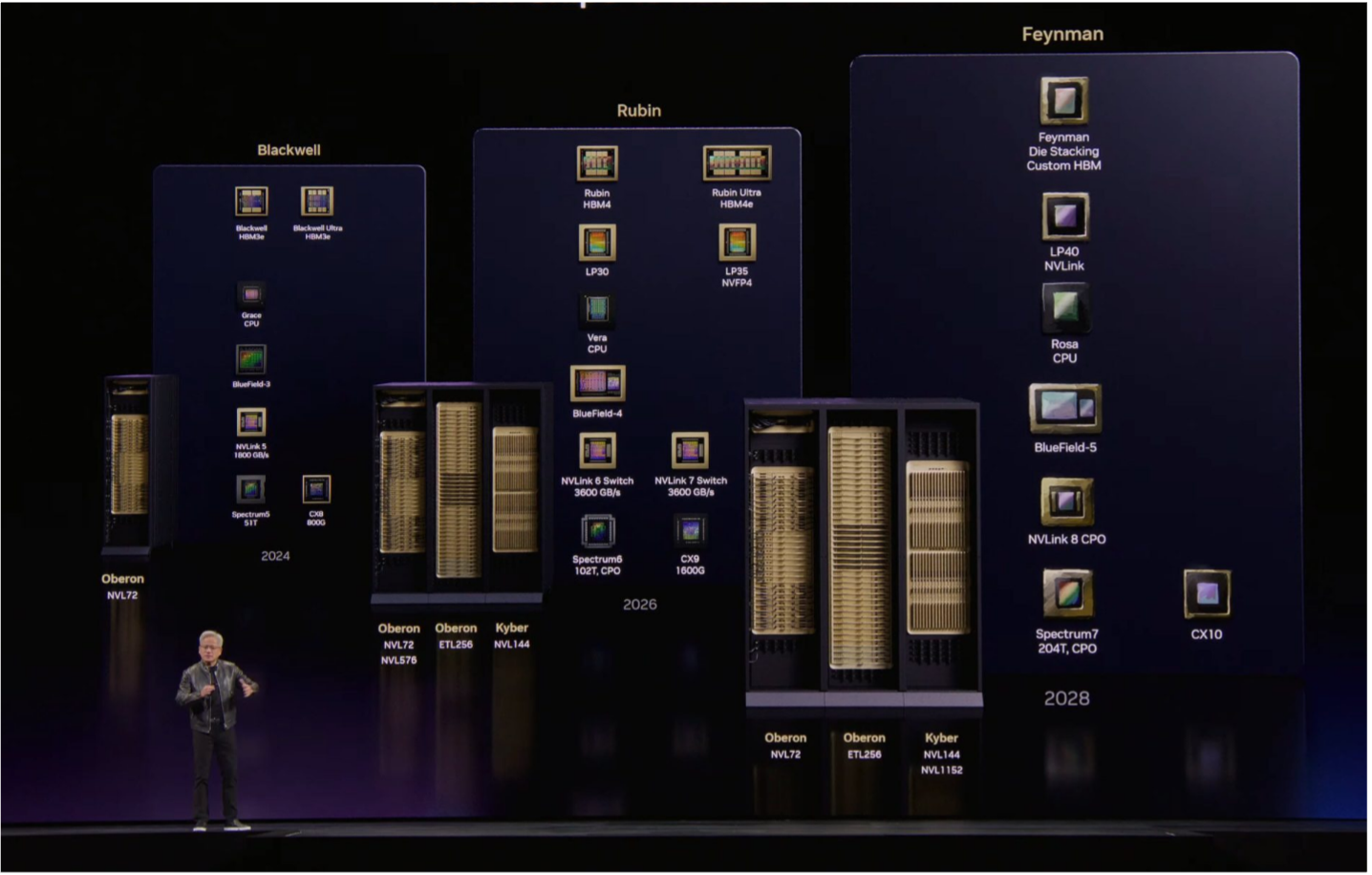

Huang confirmed Feynman, Nvidia’s 2028 GPU generation, introducing three headline innovations: 3D die stacking—the first use of stacked GPU dies in Nvidia silicon; custom HBM memory, likely a proprietary HBM4E or HBM5 variant; and fabrication on TSMC’s A16 1.6 nm node—Nvidia’s first 1 nm-class chip.

Figure 1. Nvidia maintains its yearly cadence with the announcement of the 2028 Feynman GPU.

The platform pairs a new Rosa CPU with the LP40 LPU, built jointly with the Groq team, and scales the Kyber rack architecture to NVL1152—eight times the NVL144 density of Rubin. Intel supplies EMIB advanced packaging for the I/O die.

OpenClaw and NemoClaw

Huang devoted significant time to OpenClaw—the viral agentic AI platform launched by Austrian developer Peter Steinberger in January, now an open-source project under OpenAI stewardship.

Figure 2. NemoClaw, available now. (Source: Nvidia)

Nvidia worked to make OpenClaw enterprise-secure and launched NemoClaw as its enterprise reference stack built on OpenClaw. Huang also announced the Nemotron Coalition, an open frontier model initiative that includes Perplexity, Mistral, Black Forest Labs, Cohere, and Reflection, to advance open agentic AI models.

Robotics

Huang stated that every major robotics company now works with Nvidia, reinforcing the company’s push into physical AI and humanoid robotics that began at GTC 2025.

Figure 3. Disney’s new robotic character, Olaf. (Source: Nvidia)

DLSS 5

On the consumer side, Nvidia announced DLSS 5, targeting next-generation neural rendering for PC gaming—a relatively rare consumer-focused moment at a conference that has become predominantly enterprise and AI-oriented.

The big picture

Huang’s GTC 2026 message was clear: Nvidia has expanded from GPU supplier to AI infrastructure architect—covering silicon, networking, software, agentic platforms, and robotics. The $1 trillion pipeline claim, the Groq integration, the NemoClaw enterprise stack, and the Feynman roadmap together signal that Nvidia intends to compete across the entire AI value chain—not just at the chip level—through at least the end of the decade.

What do we think?

Nvidia’s GTC 2026 keynote confirmed what the supply chain data has been signaling for months: Nvidia is no longer competing chip-to-chip. It competes system-to-system, rack-to-rack, and now platform-to-platform. The Feynman roadmap through 2028, the Groq LPU integration, and the NemoClaw agentic stack give customers a reason to stay inside Nvidia’s ecosystem for the rest of the decade. For competitors, the window to disrupt at the silicon layer is narrowing fast.

GTC 2026 marks an inflection point in how AI infrastructure gets built and bought. Nvidia no longer sells GPUs—it sells compute factories, and customers are committing to them at a trillion-dollar scale. The shift from chip procurement to platform lock-in—anchored by NVLink, NemoClaw, Groq LPU inference, and a roadmap stretching to Feynman in 2028—means purchasing decisions made today bind data center operators for the rest of the decade. That dynamic reshapes the competitive landscape for every merchant silicon vendor, hyperscaler, and AI start-up simultaneously.

We will expand on each of these announcements over the next several days, because each is significant in its own right.

Long live the king.
