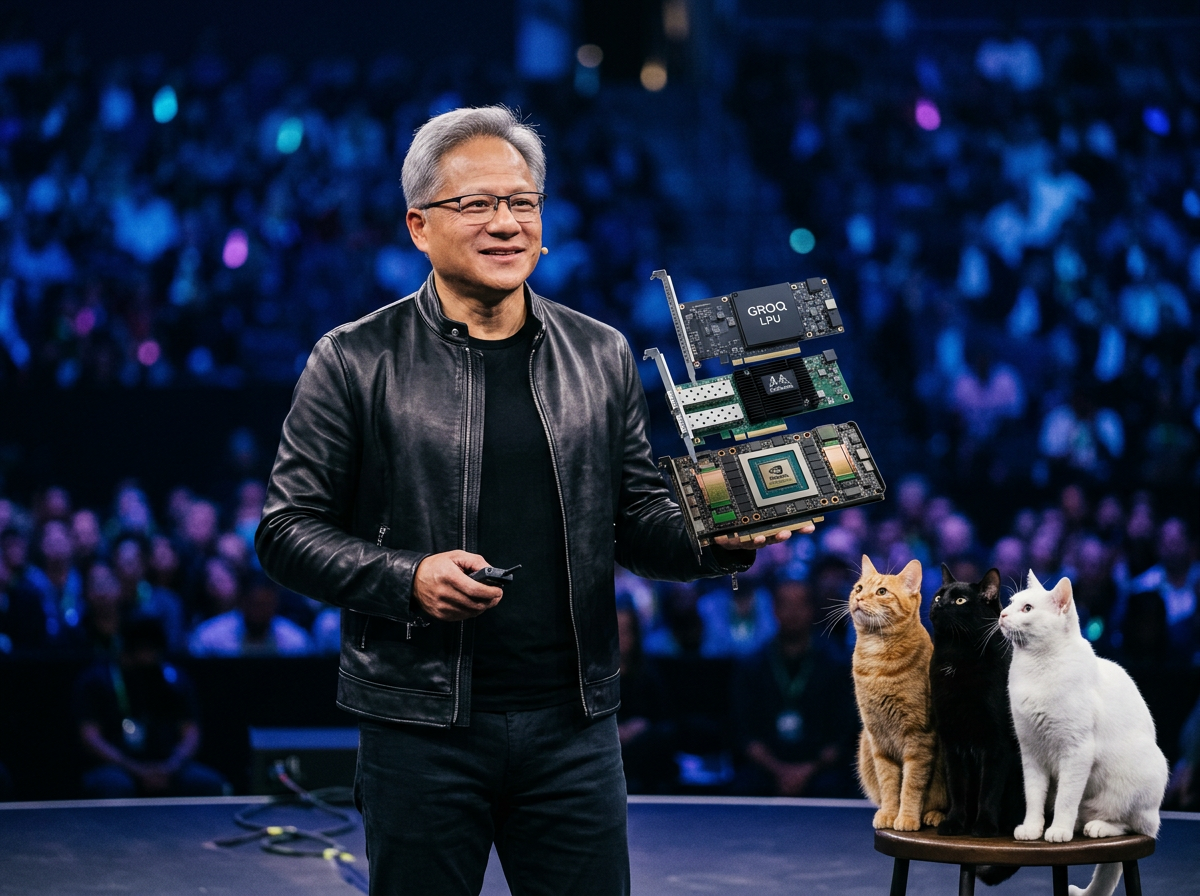

Nvidia’s $20 billion Groq acquisition looked like a bold bet when it closed in December 2025, and at GTC 2026, Jensen Huang explained exactly why he made it. Groq’s LPU doesn’t compete with Nvidia’s GPUs; it completes them. Where Vera Rubin runs out of bandwidth at extreme inference speeds, Groq picks up the slack. The result is a combined system that delivers up to 35× better tokens per watt than Blackwell alone.

Nvidia completed a $20 billion asset acquisition of AI inference chip designer Groq in December 2025—its largest deal on record. At GTC 2026, Huang revealed the strategic logic: Groq’s LPU technology integrates directly into Nvidia’s rack architecture as a companion inference accelerator alongside Vera Rubin GPUs, addressing a specific bandwidth ceiling that GPUs alone cannot solve.

Huang laid out the rationale for the Groq deal without ambiguity. NVLink 72 dominates GPU throughput across standard workloads, but at extreme token generation speeds (1,000-plus tokens per second) it runs out of memory bandwidth. Groq’s LPU architecture, built around massive on-chip SRAM and compiler-scheduled deterministic execution, targets exactly that constraint. “It still doesn’t change the fact that we need a lot of memory,” Huang told the GTC audience. “And so we’re just going to add a whole bunch of Groq chips, which expands the amount of memory it has.”
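
To make the bandwidth ceiling concrete, here is a minimal back-of-the-envelope sketch. It assumes a simplified model in which generating one token for a single stream requires reading a fixed number of weight bytes from memory, so memory bandwidth caps decode speed. Every number below (bandwidth, bytes per token, SRAM per chip) is a hypothetical placeholder chosen for easy arithmetic, not a published Nvidia or Groq specification, and the function names are ours.

```python
import math

GB = 1e9
TB = 1e12


def decode_tokens_per_second(bandwidth_bytes_per_s: float,
                             bytes_read_per_token: float) -> float:
    """Upper bound on single-stream decode speed when memory bandwidth is the limit."""
    return bandwidth_bytes_per_s / bytes_read_per_token


def chips_needed(model_bytes: float, sram_bytes_per_chip: float) -> int:
    """Number of chips required to hold the weights entirely in on-chip SRAM."""
    return math.ceil(model_bytes / sram_bytes_per_chip)


# Hypothetical inputs, for illustration only.
model_bytes = 100 * GB        # weight bytes touched per generated token
memory_bandwidth = 8 * TB     # bytes per second available to one stream
sram_per_chip = 0.5 * GB      # on-chip SRAM per accelerator

# Speed ceiling implied by the assumed bandwidth.
ceiling = decode_tokens_per_second(memory_bandwidth, model_bytes)

# Bandwidth that would be needed to reach 1,000 tokens per second.
required_bandwidth = 1000 * model_bytes

# Chips needed to keep the whole model in SRAM.
chips = chips_needed(model_bytes, sram_per_chip)

print(f"ceiling: {ceiling:.0f} tokens/s")
print(f"bandwidth for 1,000 tokens/s: {required_bandwidth / TB:.0f} TB/s")
print(f"chips to hold the model in SRAM: {chips}")
```

Under these made-up inputs, the stream tops out well below 1,000 tokens per second, and holding the model in SRAM takes hundreds of chips. That is the shape of the argument in Huang’s quote: faster memory helps, but capacity then has to come from adding chips. Real systems batch requests, cache attention state, and may read only part of the model per token, so treat this as intuition rather than a performance model.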

Huang drew a direct parallel to Nvidia’s 2020 Mellanox acquisition. Mellanox built the InfiniBand networking technology that became the foundation of Nvidia’s dominant AI cluster interconnect. Groq, in Huang’s framing, extends Nvidia’s architecture the same way: An acquired company’s technology is absorbed into the platform as a core component rather than being operated as a stand-alone product.

The performance claim is significant. The Groq 3 LPX rack paired with Vera Rubin NVL72 delivers up to 35× higher tokens per watt at extreme inference speeds compared to Blackwell NVL72 alone. Huang made the workload segmentation explicit: Pure high-throughput training and batch inference stay on Vera Rubin; real-time agentic AI, conversational inference, and low-latency interactive workloads benefit from adding Groq LPUs to the data center alongside the GPU racks. The two systems complement rather than compete.
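
The segmentation and the tokens-per-watt metric can be sketched in a few lines. This is an illustrative sketch only: the workload categories mirror the ones named above, but the `placement` function, the enum, and all throughput and power figures are our hypothetical stand-ins, not an Nvidia scheduler or measured data. Tokens per watt here means tokens per second divided by power draw in watts, which is equivalent to tokens per joule.

```python
from enum import Enum


class Workload(Enum):
    TRAINING = "training"
    BATCH_INFERENCE = "batch inference"
    AGENTIC = "real-time agentic AI"
    CONVERSATIONAL = "conversational inference"
    INTERACTIVE = "low-latency interactive"


# Workloads the article says stay on the GPU racks alone.
GPU_ONLY = {Workload.TRAINING, Workload.BATCH_INFERENCE}


def placement(workload: Workload) -> str:
    """Map a workload category to the hardware pairing described in the article."""
    if workload in GPU_ONLY:
        return "Vera Rubin"
    return "Vera Rubin + Groq LPX"


def tokens_per_watt(tokens_per_second: float, watts: float) -> float:
    """Tokens per second per watt of power draw (equivalently, tokens per joule)."""
    return tokens_per_second / watts


# Hypothetical figures, chosen only to show how an efficiency ratio is computed.
baseline = tokens_per_watt(tokens_per_second=50_000, watts=100_000)
combined = tokens_per_watt(tokens_per_second=300_000, watts=150_000)
gain = combined / baseline

for w in Workload:
    print(f"{w.value}: {placement(w)}")
print(f"efficiency gain in this made-up example: {gain:.1f}x")
```

Note that a combined system can draw more total power and still come out ahead on this metric, as long as throughput rises faster than power does.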

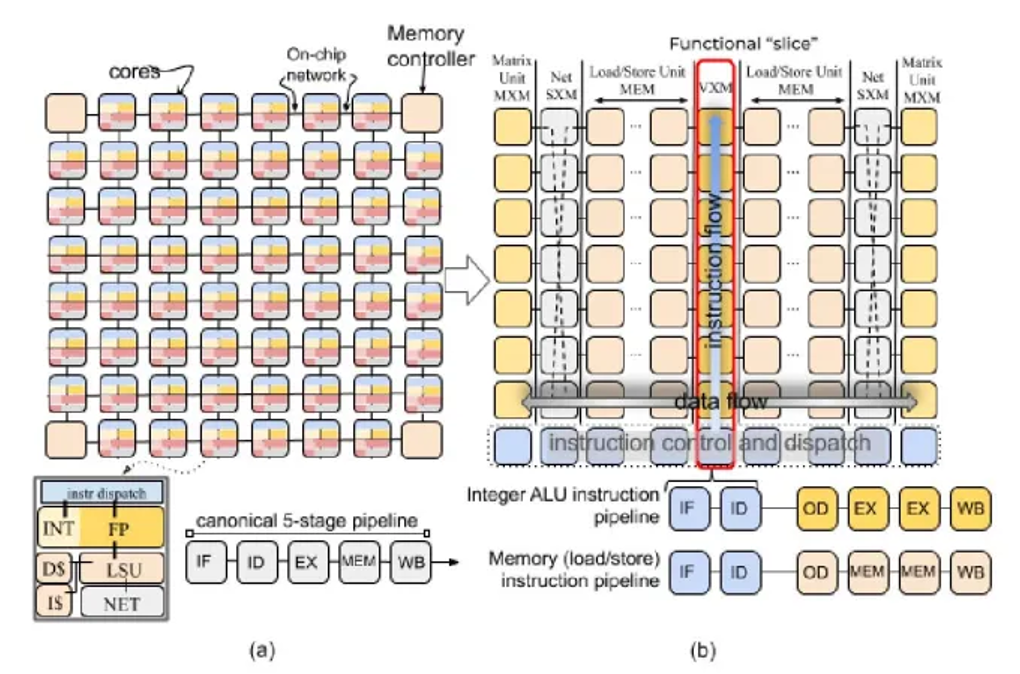

Figure 1. Groq’s LPU. (a) The tiled architecture of a conventional multicore chip. (b) The flipped design of a tensor streaming processor (TSP), where each vertical set of tiles is a homogeneous collection of functional units. (Source: Groq’s 2020 paper on the TSP.)

The Groq 3 LPU, the first chip to emerge from the acquisition, ships in Q3 2026. The companion Groq 3 LPX rack houses 256 LPUs and sits physically beside Vera Rubin rack-scale systems in data center deployments. Samsung manufactures the LP30 chip. Huang gave the Groq engineering team explicit recognition on stage, positioning them as a valued group now integrated inside Nvidia, consistent with his Q4 FY2026 earnings call comments where he first signaled the GTC announcement.

What do we think?

The Mellanox analogy is not rhetorical—it is a blueprint. Mellanox turned Nvidia from a chip vendor into a networking company. Groq turns Nvidia from a training-first GPU vendor into an inference-optimized full-stack platform. The LPU fills the one gap Nvidia’s GPU architecture cannot close at extreme token speeds. That gap is exactly where the inference economy is heading.

The Groq integration signals an inflection point in AI infrastructure economics. For three years, inference cost was a GPU problem—solved by stacking more H100s or B200s. The Groq LPX rack reframes that equation: At extreme token speeds, the bottleneck is bandwidth and memory, not FLOPS. Nvidia now sells a combined GPU-LPU system purpose-built for the inference economy—where tokens, not training runs, generate revenue. That architectural shift redefines what a data center AI purchase looks like from this point forward.

Huang gave the Groq team visible credit on stage, calling out their work specifically and positioning them as a valued engineering group now inside Nvidia—consistent with his public comments at the Q4 earnings call where he first signaled the GTC reveal.

We are tracking the 133 companies making AI processors and their acquisitions (25 so far) in our AI Processor Tracker service, which will be announced soon, and in our current AI processor annual market report. We also offer a quarterly update service on AIPs—you can find a free summary here.
