Light-based computing just got real, though with important caveats worth understanding. Q.ANT, a Stuttgart, Germany, start-up founded in 2018 as a spin-off from Trumpf, the German industrial laser giant, builds what it calls the world’s first commercially shipping all-photonic AI co-processors. Demand already outstrips production. Q.ANT’s chips run at 30 W, against 700–1,000 W for Nvidia’s GPUs, and are installed at two of Europe’s leading supercomputing centers, with US data centers lining up. The photonic compute race is broader than most people realize, and Europe is leading it.

Q.ANT CEO Michael Förtsch showing the NPU 2 add-in board (AIB). (Source: Q.ANT)

Q.ANT builds photonic co-processors for AI data centers, and it has hit a production ceiling: customer demand exceeds what its Stuttgart pilot line can deliver. That is a notable constraint for a 100-person company that shipped its first commercial systems less than three years after its founding.

The company spun out of Trumpf in 2018. Trumpf, founded by Christian Trumpf in 1923, is a privately held industrial technology company headquartered in Ditzingen, near Stuttgart, with around €5 billion (~US $5.8 billion) in annual revenue from industrial lasers and photonics equipment. Rather than build a new internal division, Trumpf set up Q.ANT as a stand-alone start-up, taking an early equity stake and transferring decades of photonics manufacturing expertise to give the new company a head start on the hardest part of the problem: making light do reliable, precise work at manufacturing scale.

Q.ANT operates its all-photonic processor line at the Institute for Microelectronics Stuttgart (IMS CHIPS), a non-profit academic research foundation on the Stuttgart-Vaihingen campus. The facility dates to the early 1980s and ran for decades as a CMOS research line within the University of Stuttgart ecosystem and Baden-Württemberg’s innovation alliance. Rather than build a greenfield fab, Q.ANT repurposed the existing 90 nm-era equipment and invested €14 million (~US $16.2 million) in new machinery and thin-film lithium niobate (TFLN) processes, establishing a pilot line capable of 1,000 wafer starts per year. Retooling a commercial fab would have cost billions; an academic research facility proved far more accessible.

“We used the tools already there but brought our proprietary processes to the fab. We control everything from wafer level—we build the TFLN wafers, have a design-and-layout team, a fabrication team, package the chips, build the co-processors, build the computers, and even build the software stack. We walk it all the way through,” said CEO Michael Förtsch at SEMICON Europa.

The vertically integrated pilot line yields roughly 50,000 to 60,000 chips per year, enough to support 100 to 500 server deployments. Q.ANT already has processors running at the Leibniz Supercomputing Centre (LRZ) in Munich and the Jülich Supercomputing Centre (JSC) near Düsseldorf, Germany, with two additional HPC centers scheduled to receive installations in Q1 2026 and a commercial cloud deployment imminent.
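A rough consistency check on these volumes, assuming every chip from the pilot line’s 1,000 annual wafer starts ships into those deployments (an assumption: the source does not say how many chips one server or deployment holds, and the arithmetic ignores yield loss and spares):

$$
\frac{50{,}000 \text{ to } 60{,}000 \ \text{chips/yr}}{1{,}000 \ \text{wafer starts/yr}} \approx 50 \text{ to } 60 \ \text{chips per wafer start}
$$

$$
\frac{50{,}000 \ \text{chips}}{500 \ \text{deployments}} = 100, \qquad \frac{60{,}000 \ \text{chips}}{100 \ \text{deployments}} = 600
$$

On those assumptions each deployment would absorb roughly 100 to 600 chips, which suggests a “deployment” here means a multi-card installation rather than a single PCIe card in one server; the article does not define the term.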

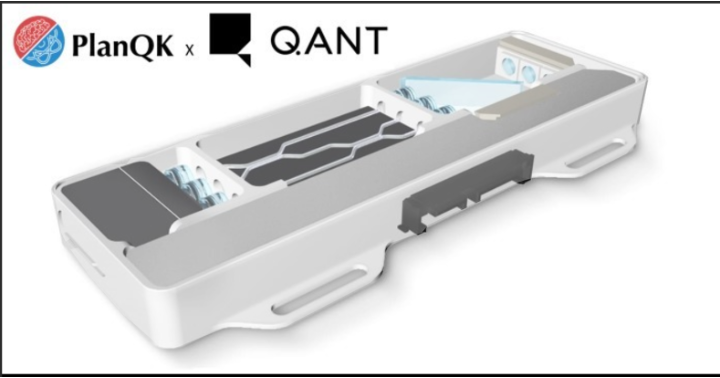

Figure 1. The Q.ANT NPU 2. (Source: Q.ANT)

One important caveat about Q.ANT’s claim to be “first”: The company targets nonlinear AI workloads (image, audio, and video processing, physics simulations, and sensor fusion) rather than LLM inference, where Förtsch acknowledges the return on investment remains elusive. Q.ANT’s processor is a co-processor that runs alongside conventional CPUs and GPUs via PCIe, not a stand-alone GPU replacement. That is a meaningful distinction. Lightmatter published a breakthrough photonic processor paper in Nature in April 2025 demonstrating transformers and ResNet running on a photonic chip without modification, and MIT researchers have demonstrated fully integrated photonic neural network processors on-chip, though both efforts remain pre-commercial. Q.ANT’s claim to primacy therefore holds specifically for commercially available, rack-deployable all-photonic compute hardware.

Q.ANT also takes a fundamentally different approach to memory than conventional AI processors do. Instead of integrating large on-chip SRAM or on-package HBM, the photonic processor relies on external DRAM (DDR-class memory) managed by a host system. The chip executes matrix–vector operations in the optical domain, while model weights, inputs, and outputs reside in standard electronic memory. This design avoids the power and complexity of large on-chip memory arrays.

The processor follows a host-attached model over PCIe: Data streams from CPU-controlled DRAM into the photonic core, is processed optically, and returns as digital output. As a result, performance depends less on local memory bandwidth and capacity than on data movement and I/O efficiency; the bottleneck shifts toward how efficiently the host can feed the compute engine.
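To make the I/O argument concrete, here is a toy roofline-style model in Python: a sketch under stated assumptions, not a description of Q.ANT’s hardware. It treats the co-processor as a black box that sustains some peak rate of matrix–vector products and is fed over a single PCIe link. The class name, every parameter value, the 8-bit element size, and the choice between loading weights once or re-streaming them from host DRAM for every product are all hypothetical; the source does not describe how Q.ANT loads weights into its photonic core.

```python
from dataclasses import dataclass


@dataclass
class HostAttachedAccelerator:
    """Toy model of a PCIe-attached co-processor; every parameter is hypothetical."""

    peak_mvm_per_s: float       # matrix-vector products/s the compute core can sustain
    link_bytes_per_s: float     # usable PCIe bandwidth, treated as one shared pipe
    bytes_per_element: int = 1  # assumed 8-bit encoding for weights, inputs, outputs

    def bytes_per_mvm(self, rows: int, cols: int, reload_weights: bool) -> int:
        """Bytes crossing the link for one y = W @ x, with W of shape (rows, cols)."""
        elements = cols + rows  # input vector in, output vector back to host DRAM
        if reload_weights:
            elements += rows * cols  # weights re-streamed from host DRAM every product
        return elements * self.bytes_per_element

    def feed_limit(self, rows: int, cols: int, reload_weights: bool) -> float:
        """Products/s the link alone could deliver."""
        return self.link_bytes_per_s / self.bytes_per_mvm(rows, cols, reload_weights)

    def achieved_mvm_per_s(self, rows: int, cols: int, reload_weights: bool) -> float:
        """Roofline-style bound: the slower of compute and data feed wins."""
        return min(self.peak_mvm_per_s, self.feed_limit(rows, cols, reload_weights))

    def bottleneck(self, rows: int, cols: int, reload_weights: bool) -> str:
        """Name the resource that caps throughput for this workload shape."""
        if self.feed_limit(rows, cols, reload_weights) < self.peak_mvm_per_s:
            return "I/O"
        return "compute"


if __name__ == "__main__":
    # Hypothetical card: 10 million products/s, ~32 GB/s link (about PCIe 4.0 x16, one direction).
    card = HostAttachedAccelerator(peak_mvm_per_s=1e7, link_bytes_per_s=32e9)
    for reload in (False, True):
        rate = card.achieved_mvm_per_s(256, 256, reload)
        print(f"reload_weights={reload}: {rate:,.0f} products/s, "
              f"{card.bottleneck(256, 256, reload)}-bound")
```

With these made-up numbers, loading weights once leaves the card compute-bound, while re-streaming a 256×256 weight matrix for every product makes it I/O-bound by roughly 20×. That is the kind of bottleneck shift the paragraph describes: the limit moves from the compute engine to how fast the host can feed it.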

LRZ Chairman Dieter Kranzlmüller evaluated Q.ANT’s photonic processors against Nvidia GPUs and reported a roughly 50× performance advantage in matrix–vector multiplication over conventional electronic approaches. He also highlighted a significant efficiency gap: The Q.ANT device operates at about 30 W, while comparable Nvidia accelerators draw between 700 and 1,000 W. Because power consumption now constrains AI system scaling, that difference in energy profile has major implications for future deployments. Kranzlmüller sees a plausible path to 1,000× performance gains as the technology matures, though he stops well short of calling it “revolutionary” today.

Förtsch gives a direct answer to the software barrier that has historically tripped up non-CUDA platforms: Developers write Python, Rust, C, or C++ in standard Jupyter notebook environments, and Q.ANT handles the translation layer transparently. The company ships a new processor generation each November, targeting a 100× performance improvement per generation.
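Kranzlmüller’s throughput and power figures can be combined into a rough performance-per-watt estimate. The Python sketch below does that arithmetic, assuming (as the source does not establish) that the 50× speedup and the 30 W versus 700–1,000 W power figures describe one like-for-like comparison on the same workload. The function name is illustrative only, and it counts device power alone, ignoring host CPU, DRAM, and cooling power, none of which the source breaks out.

```python
def perf_per_watt_ratio(speedup: float, baseline_watts: float, device_watts: float) -> float:
    """Performance per watt of a device relative to a baseline.

    speedup is device throughput divided by baseline throughput on the same task,
    so the ratio is (speedup / device_watts) / (1 / baseline_watts).
    """
    if device_watts <= 0 or baseline_watts <= 0:
        raise ValueError("power figures must be positive")
    return speedup * baseline_watts / device_watts


# Figures quoted in the article; treating them as one comparison is an assumption.
SPEEDUP = 50.0
DEVICE_WATTS = 30.0
for gpu_watts in (700.0, 1000.0):
    ratio = perf_per_watt_ratio(SPEEDUP, gpu_watts, DEVICE_WATTS)
    print(f"vs {gpu_watts:.0f} W GPU: ~{ratio:,.0f}x performance per watt")
```

Under those assumptions the implied advantage falls between roughly 1,200× and 1,700× performance per watt. Treat it as an optimistic, device-only figure: the 50× number was framed against “conventional electronic approaches,” while the power figures refer to Nvidia accelerators specifically.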

Understanding Q.ANT’s position requires splitting the photonic landscape in two. Photonic compute companies, where light does the arithmetic, include Q.ANT, Lightmatter (which published the 2025 Nature photonic processor paper), Salience Labs (UK), Akhetonics (Germany; all-optical, including optical memory; €6 million seed round in 2024), and optoML (Switzerland; in stealth). Photonic interconnect companies, where light moves data between conventional chips, include Lightmatter again with its Passage M1000 platform (claimed 114 Tb/s bandwidth; the company is valued at $4.4 billion), Ayar Labs (optical I/O chiplets, backed by AMD, Intel, and Nvidia), Celestial AI (acquired by Marvell for $3.25 billion in late 2025), and nEye Systems (wafer-scale optical circuit switches, backed by Alphabet, Microsoft, and Nvidia), plus the co-packaged optics (CPO) programs at Nvidia, Intel, Broadcom, and Marvell. The two categories share a name but not a product.

Roughly six companies pursue photonic compute, while 10 or more pursue photonic interconnects. Of the five photonic compute companies named above, four are European (Q.ANT and Akhetonics in Germany, Salience Labs in the UK, and optoML in Switzerland), with Lightmatter the sole US player. That European concentration traces directly to the funding structure. EU public grants through Horizon Europe, Germany’s Federal Ministry of Research, Technology and Space (BMFTR), and regional development banks such as L-Bank provide patient capital that tolerates 10-year development timelines, a fit for photonics research that US venture capital, with its demand for faster returns, cannot match. Once working silicon arrives, private VCs step in: Q.ANT’s $80 million total raise came primarily from Cherry Ventures, UVC Partners, and imec.xpand, with participation from Duquesne Family Office, the investment vehicle of billionaire Stan Druckenmiller. Trumpf remains a financial backer, providing both credibility and equipment access.

Q.ANT is seeking foundry partnerships to scale beyond Stuttgart. In a separate deal, UMC recently announced a TFLN manufacturing partnership with HyperLight, Wavetek, and Jabil, a sign that the broader TFLN supply chain is developing. Q.ANT’s goal is for photonic co-processors to become standard data center infrastructure by 2030.

What do we think?

Q.ANT occupies a real but narrow beachhead. Its primacy claim (the first commercial all-photonic compute hardware in a rack) holds, but the qualifier matters: It targets nonlinear HPC workloads, not LLM inference, and ships in the hundreds of servers rather than the thousands. The 30 W versus 1,000 W comparison with Nvidia is arresting and genuine. The software story is more credible than what most non-CUDA challengers offer. The production ceiling is a quantity problem, and it confirms that paying customers exist. Whether photonic compute scales to general AI workloads within this decade remains the open question.

Q.ANT’s production ceiling marks an inflection point in AI infrastructure thinking, if not yet in AI infrastructure itself. For the first time, a photonic compute product is limited by how many units it can build rather than by technology or adoption, the signal that a market has crossed from experimental to early commercial. With global data center electricity consumption on track to rival Japan’s entire national electricity consumption by 2026, the energy calculus forces architects to look beyond CMOS. A 30 W photonic co-processor running alongside a 1,000 W GPU is not a replacement story yet, but the direction of travel is no longer theoretical.


Article Update

Q.ANT Gen 2 NPU deployment at LRZ

Q.ANT has deployed its Gen 2 Native Processing Units (NPUs) at the Leibniz Supercomputing Centre (LRZ) in Munich, advancing photonic co-processing from lab validation into production HPC operations. The Gen 2 NPUs install via standard PCIe interfaces and run AI and scientific simulation workloads alongside existing CPUs and GPUs, with no architectural overhaul of the host system required.

Benchmark results at LRZ show the Gen 2 architecture delivering 50× higher matrix multiplication throughput, 25× faster ResNet-18 inference, and 6× lower energy consumption than Gen 1. Enhanced analog units handle nonlinear functions natively, reducing the parameter counts and training depth that models require. Q.ANT’s TFLN photonic integrated circuits execute operations directly in the optical domain, removing transistor switching from the compute path and with it much of the associated heat generation and cooling overhead.

LRZ runs large-scale scientific simulations and AI research to demanding production standards, not under controlled lab conditions. That context matters. Drug discovery, materials design, and adaptive optimization workloads shaped the benchmark suite, so the efficiency gains apply directly to real compute-intensive problems. Germany’s Federal Ministry of Education and Research (BMBF) supported the collaboration.

CEO Michael Förtsch framed the deployment plainly: Brute-force transistor scaling converts electricity into heat without solving the underlying compute problem. Q.ANT’s position is that photonic co-processing offers a different scaling axis—one that increases throughput without proportionally increasing energy draw.

LRZ Chairman Professor Dieter Kranzlmüller confirmed the evaluation runs under real production workloads, not synthetic benchmarks, and covers performance, precision, and energy efficiency across heterogeneous HPC architectures. Q.ANT has now crossed from demonstration to operational deployment at one of Europe’s premier supercomputing facilities.

What do we think?

The LRZ deployment is the most credible third-party validation Q.ANT has achieved. Production HPC environments impose constraints (reliability, integration, precision) that lab benchmarks do not. A 6× energy reduction over the previous generation under real workloads is a result worth tracking. JPR will watch whether the Jülich Supercomputing Centre partnership and the two additional Q1 2026 HPC installations produce comparable numbers.