“Larrabee silicon and software development are behind where we hoped to be at this point in the project,” said Intel spokesperson Nick Knupffer. “As a result, our first Larrabee product will not be launched as a standalone discrete graphics product.” (December 4, 2009.)

After three years of bombast, Intel shocked the world by canceling Larrabee. Instead of launching the chip in the consumer market, Intel will make it available as a software development platform for both internal and external developers. Those developers can use it to develop software that can run in high-performance computers.

The following is an excerpt from an article in this week’s edition of Jon Peddie Research’s TechWatch bi-weekly report.

How it began

Larrabee was started in 2006 partially due to a conversation at the COFES conference between an industry consultant and some Intel guys. By 2007, the industry was pretty much aware of Larrabee, although details were scarce and only came dribbling out from various Intel events around the world. In August of 2007 it was known that the product would be x86, capable of performing graphics functions like a GPU, but not a “GPU” in the sense that we know them, and was expected to show up some time in 2010, probably 2H’10.

Speculation about the device, in particular how many cores it would have, entertained the industry, and maybe employed a few pundits, and several stories appeared to add to the confusion. Our favorite depiction of the device was the blind men feeling the elephant – everyone (outside Intel) claimed to know exactly what it was – and no one knew what it was beyond the tiny bits they were told.

What we did know was that it would be a many-core product (“many” means something over 16 this year), would have a ring-communications system, hardware texture processors (a concession to the advantages of an ASIC), and a really big coherent cache. But most importantly, it would be a bunch of familiar x86 processors, and those processors would have world-class floating-point capabilities with 512-bit vector units and quad threading.

Who asked for Larrabee anyway?

Troubled by the GPU’s gain in the share of the silicon budget in PCs, which came at the expense of the CPU, Intel sought to counter the GPU with its own GPU-like offering.

There has never been anything like it, and as such there were barriers to break and frontiers to cross, but it was all Intel’s thing. It was a project. Not a product. Not a commitment, not a contract; it was, and is, an in-house project. Intel didn’t owe anybody anything. Sure, they bragged about how great it was going to be, and maybe made some ill-advised claims about performance or schedules, but so what? It was, for all intents and purposes, an Intel project – a test bed, some might say a paper tiger, but the demonstration of silicon would make that a hard position to support.

So last week most of the program managers tossed their red flags on the floor and said it’s over: we can’t do what we want to do in the time frame we want to do it in. And the unspoken subtext was that we’re not going to allow another i740 to come out of Intel ever again.

Now the bean counters took over. What was/is the business opportunity for Larrabee? What is the ROI? Why are we doing this? What happens if we don’t? A note about companies – this kind of brutal, kill-your-darlings examination is the real strength of a company.

Larrabee as it had been positioned to date would die. It would not meet Intel’s criteria for price, performance, and power in the window projected for it. It still needed work; like Edward Scissorhands, it wasn’t finished yet. However, the company has stated that it still plans to launch a many-core discrete graphics product, but won’t be saying anything about that future product until sometime next year.

And so it was stopped. Not killed – stopped.

Act II

Intel invested a lot in Larrabee in dollars, time, reputation, dreams and ambitions, and exploration. None of that is lost. It doesn’t vanish. Rather, that work provides the building blocks for the next phase. Intel has not changed its investment commitment on Larrabee. No one has been fired, transferred or time shared. In fact there are still open reqs.

Intel has built and learned a lot. More, maybe, than they originally anticipated. Larrabee is an interesting architecture. It has serious potential and opportunity as a significant co-processor in HPC, and we believe Intel will pursue that opportunity. They call it “throughput computing.” We call “throughput computing” a YAIT: yet another Intel term.

So the threat of Larrabee to the GPU suppliers shifts from the graphics arena to the HPC arena – more comfortable territory for Intel.

Next?

This is not the end of Larrabee as a graphics processor – this is a pause. If you build GPUs, enjoy your summer vacation; the lessons will begin again.

Or will they?

Maybe the question should be – why should they?

Remember how we got started – one of the issues was the GPU’s gain in the silicon budget in PCs at the expense of the CPU. Several parameters are involved in that, including:

- Revenue share of the OEM’s silicon budget

- Unit share of CPU-GPU shipments

- Mind share of investors and consumers

- Subsequent share price on all of the above.

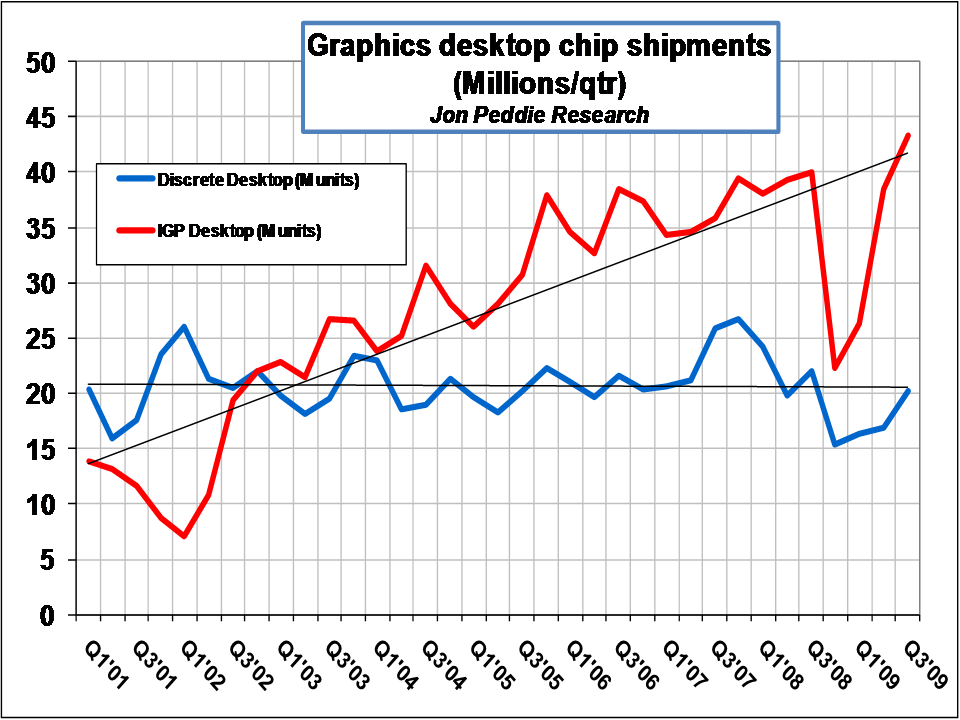

Discrete GPU unit shipments have a low growth rate. ATI and Nvidia hope to offset that with GPU compute sales; however, those markets will grow more slowly than the gaming and mainstream graphics markets have over the past ten years.

Why would any company invest millions of dollars to be the third supplier in a flat-to-low-growth market? One answer is that the ASP and margins are very good. It’s better for the bottom line, and hence the P/E, to sell a few really high-value parts than a zillion low-margin parts.

Why bother with discrete?

GPU functionality is going in with the CPU. It’s a natural progression of integration – putting more functionality in the same package. Next year we will see the first implementations. They will be mainstream in performance and won’t seriously compete with discrete GPUs, but they will further erode the low end of the discrete realm. Just as IGPs stole the value segment from discrete, embedded graphics in the CPU will take away the value and mainstream segments, and even encroach on the lower segments of the performance range.

Relative growth of discrete desktop GPUs to integrated graphics

This means the unit market share of discrete GPUs will decline further. That being the case, what is the argument for investing in that market?

These are the questions Intel will have to wrestle with over the next six to twelve months.

Don’t be too quick to come up with the answer for them — 83 million discrete parts a year with a good ASP and margin is a very attractive $10 billion business.
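As a rough check on that figure, the implied average selling price can be backed out from the two numbers quoted above. This is only a back-of-the-envelope sketch in Python; the variable names are ours, and the result is only as good as the rounded inputs.

```python
# Back-of-the-envelope check of the discrete GPU market figures quoted above.
# Both inputs are the article's rounded numbers; nothing else is assumed.
units_per_year = 83_000_000          # discrete parts shipped per year
market_value_usd = 10_000_000_000    # approximate annual market value

# Average selling price implied by dividing market value by unit volume.
implied_asp = market_value_usd / units_per_year
print(f"Implied ASP: ${implied_asp:.0f} per part")
```

That works out to roughly $120 per part, which is why a flat unit market can still be an attractive one.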

Management of the server cash cow business

Last year, after the Intel-Dreamworks announcement, when Dreamworks pledged allegiance to Intel’s processors and expressed their eagerness to put Larrabee to work, we asked a senior Intel executive about the risk of losing their $100,000 server business to a couple of $500 Larrabee chips. He said, in a low voice, head slightly bowed, and clearing his throat, “yes… we’re, ah, trying to manage that…”

Problem solved. Larrabee is no longer a threat to the server business; it simply augments it. No doubt the Xeon folks will be happy to hear that.

Conclusion

Intel has made a hard decision and, we think, a correct one. Larrabee silicon was pretty much proven, and the demonstration at SC09 of measured performance hitting 1 TFLOPS (albeit with a little clock tweaking) on an SGEMM performance test (4K by 4K matrix multiply) was impressive. Interestingly, it was a compute measurement, not a graphics measurement. Maybe the die had been cast then (no pun intended) with regard to Larrabee’s future.
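To put the SC09 number in perspective, here is a rough sizing of that workload. It is a sketch under two assumptions that are ours, not Intel’s: that “4K” means 4096, and that the test uses the conventional 2N³ operation count for a dense N-by-N matrix multiply.

```python
# Rough size of the SGEMM workload mentioned above.
# Assumptions (not from the article): "4K" means 4096, and the usual
# 2 * N**3 floating-point operation count for an N x N matrix multiply.
n = 4096
flops_per_multiply = 2 * n**3     # one multiply and one add per inner-product term
sustained_flops = 1e12            # 1 TFLOPS, as reported at SC09

# Time for one full matrix multiply at the reported sustained rate.
seconds_per_multiply = flops_per_multiply / sustained_flops
print(f"{flops_per_multiply / 1e9:.1f} GFLOP per multiply")
print(f"{seconds_per_multiply * 1e3:.0f} ms per multiply at 1 TFLOPS")
```

Under those assumptions, one multiply is about 137 billion floating-point operations, so a sustained 1 TFLOPS would complete roughly seven of them per second.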

Intel’s next move will be to make Larrabee available as an HPC SKU software development platform for both internal and external developers. Those developers can use it to create software that can run in high-performance computers.

We think this makes a lot of sense, and leaves the door open for Intel to take a second run at the graphics processor market. The nexus of compute and visualization, something we discussed at Siggraph, is clearly upon us, and it’s too big and too important for Intel not to participate in all aspects of it.