MediaTek Analyst Day pointed toward a hybrid model in which cloud and local processing coexist, with agentic AI making local systems more useful and easier to justify economically. The more interesting points were about utility: latency, offline use, cross-device context, and building hardware for future local models.

(Source: JPR)

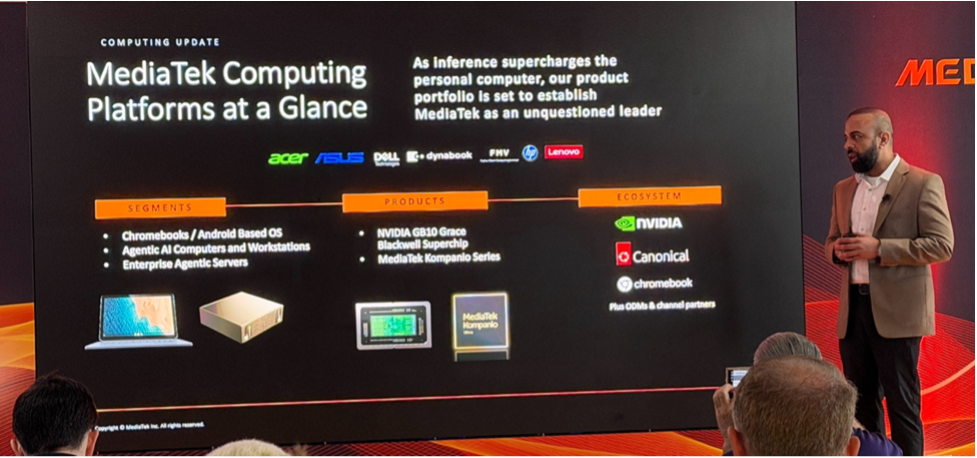

At MediaTek Analyst Day 2026, three themes stood out: where computing is heading, what consumer AI devices are actually for, and which AI features are genuinely useful. MediaTek’s VP of Computing Platforms, PD Rajput, laid out the landscape, followed by a panel with Google’s John Solomon (VP/GM for Laptops, Tablets, Education, and Android Enterprise) and Lenovo’s Benny Zhang (GM, Chromebook BU). The conversation moved beyond feature lists toward a more grounded look at what AI on consumer devices can realistically deliver.

1. Consumer AI is settling into a hybrid model

The clearest shared view was that consumer AI will not be purely local or purely cloud-based. Rajput said, “There’s a hybrid AI loop” and argued that running AI locally increasingly “does make sense” when the economics and privacy requirements are right, while cloud remains necessary where it has to be used. Solomon put it slightly differently, saying the market is developing on “a parallel track,” with inferencing growing “both in the data center and at the edge.”

2. Agentic AI is making local computing more useful

Rajput kept returning to AI moving beyond passive assistance and into agentic workflows. In his keynote, he said, “With agentic AI, the inflection point is here,” and he described a future of “agents doing computer use, tool use, driving your workflows.” During the panel, he added that when agents are “doing things on your behalf, most of that hopefully should be local.”

The category is at last moving beyond weakly useful branded AI features and toward systems that can act, coordinate, and execute. That shift changes how companies like MediaTek frame the category as they stake a claim to category and thought leadership. Solomon pushed in the same direction, saying the industry should spend less time talking about AI as a label and more on “whether devices actually help users get things done.”

3. The economics of local AI are becoming easier to explain

Rajput’s description of local systems as “local token generators” was one of the more interesting ideas of the day. Once a user or enterprise maps a recurring workflow to a specific model, buying local compute capacity may beat paying for repeated cloud inference. As he put it: “Once you buy it, keep generating tokens as much as you want. The cost economics just work out so much better.” He tied that to the increased clarity of the “threshold on when local token generation makes sense versus going to cloud versus going on-prem.”
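To make the threshold idea concrete, here is a minimal break-even sketch. It is our own illustration, not a model MediaTek presented, and every figure in it (hardware cost, cloud price per million tokens, local running cost) is a hypothetical placeholder rather than a number cited at the event.

```python
def breakeven_tokens(hardware_cost, cloud_price_per_million,
                     local_cost_per_million=0.0):
    """Return the token count at which buying local hardware costs the same
    as paying for cloud inference, or None if local never catches up.

    All inputs are hypothetical and share one currency unit:
      hardware_cost            one-time cost of the local device
      cloud_price_per_million  cloud price per million tokens
      local_cost_per_million   local running cost (e.g. energy) per million tokens
    """
    saving_per_million = cloud_price_per_million - local_cost_per_million
    if saving_per_million <= 0:
        # Cloud is as cheap or cheaper per token, so the hardware never pays off.
        return None
    return hardware_cost / saving_per_million * 1_000_000


# Hypothetical example: a 600-unit device, cloud at 4.0 per million tokens,
# local running cost of 1.0 per million tokens.
tokens = breakeven_tokens(600, 4.0, 1.0)
print(f"Break-even at about {tokens:,.0f} tokens")
```

Under these assumed numbers the device pays for itself after roughly 200 million tokens. The sketch ignores depreciation, model quality differences, and idle time, all of which would move the threshold in practice.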

Figure 1. MediaTek’s VP of Computing Platforms, PD Rajput. (Source: JPR)

4. The real test for local AI is latency, offline use, and context

As one might expect from Google, Solomon argued that “local equals private” is not automatically true. “The No. 1 criterion we look at is latency,” he said, with “offline” a close second, alongside “context-aware across applications,” that is, avoiding unnecessary cloud round trips when two apps can share awareness locally. He also flagged the trade-offs: “You’re not going to have the highest performing models with the best reasoning,” and local processing costs battery life.

The panel did not present on-device AI as automatically better. The argument was that local makes sense where speed, continuity, and convenience are the priorities. Lenovo’s Zhang made the same point from a product perspective, saying customers “don’t need to see us from cloud or from the client. We just have a great experience.”

5. Consumer AI products are being designed for models that do not exist yet

Notebook and device makers are not designing around today’s AI models alone. Solomon said Google and its partners are “building in capacity for more local [AI],” treating it as “insurance” or “headroom” for stronger local AI use over time. Rajput made the same point from the model side: “Every 12 months, a 100B model we have is now a 10B model, or an 8B model, or a 6B model,” and those models are “primed to be running on-device.”

Memory, efficiency, and platform planning kept surfacing because the expectation is not just that devices should handle today’s AI workloads; they need headroom to become more useful as smaller models improve and more tasks shift locally.
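A rough way to see why memory headroom matters is to estimate how much memory a model's weights alone occupy: parameter count multiplied by bits per weight. The sketch below is our own back-of-the-envelope illustration. The parameter counts echo Rajput's examples, while the precision choices (16-bit and 4-bit) are our assumptions, and the estimate excludes activations, the KV cache, and runtime overhead.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for model weights only, in gigabytes (10^9 bytes).

    params_billions  model size in billions of parameters
    bits_per_weight  storage precision per weight (assumed, e.g. 16 or 4)
    """
    return params_billions * bits_per_weight / 8


# Parameter counts taken from the quote; precisions are assumptions.
for params, bits in [(100, 16), (10, 4), (8, 4)]:
    print(f"{params}B parameters at {bits}-bit: "
          f"about {weight_memory_gb(params, bits):.0f} GB")
```

By this estimate, a 100B model at 16-bit precision needs about 200 GB for weights, while an 8B model at 4-bit precision needs about 4 GB, which is within reach of a consumer notebook's memory.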

What do we think?

MediaTek, Google, and Lenovo were aligned on where consumer AI is heading and which products should be built around it. We have been reasonably skeptical of the AI PC trend because much of it has amounted to branded applications with limited utility, and some of that skepticism remains. But the discussion pointed to a more substantive next phase: agents that can “do things on your behalf,” better orchestration across devices, and decisions about what runs locally versus in the cloud. If vendors can deliver those benefits at consumer price points and within sensible memory constraints, AI PCs may start to look like a genuinely compelling class of machine rather than a marketing category.

The respect Lenovo and Google showed for MediaTek was very clear. No longer seen as just a chip supplier, MediaTek is taking a place among the industry leaders and setting direction rather than acting as a fast follower. Nvidia’s relationship with MediaTek illustrates the same point. We expect to see a much stronger marketing presence from the company.
