3D streaming infrastructure provider Miris announced the launch of a public beta for its new 3D asset streaming platform. Miris is building the infrastructure to deliver 3D at Internet scale. The platform is offered as a managed service: 3D content owners upload a 3D asset to Miris and can then stream that asset to any device, instantly and at high fidelity, at costs comparable to video. This unlocks a wide range of use cases, including retail product configurators, architectural visualization, digital twins, and interactive retail, among others.

Miris is growing a new concept for transforming and delivering 3D content on the Web. (Source: Miris)

It’s been a long evolutionary development process for 3D on the Web, starting decades ago with the advent of VRML and progressing to O3D and WebGL, and advancing to WebXR and WebGPU. With each step forward, 3D has become richer and easier to manage. A new 3D streaming infrastructure provider, Miris, is determined to leave its mark by overcoming the hurdle of distributing 3D quickly, at high quality, to as many people as possible, and without breaking the bank.

Today, creators have one of two options for delivering robust 3D content on the Web. They’re either using common formats like glTF, or they’re spinning up cloud infrastructure to pixel-stream their content. Both options have trade-offs. The Web download approach offers stable standards, like glTF, but sacrifices visual quality or speed as the content downloads. Pixel streaming, on the other hand, provides high-quality visuals but at a high GPU cost, one that grows even larger at consumer scale. The choice, then, is to trade speed for fidelity, or fidelity for reach, says Will McDonald, chief product officer at Miris.

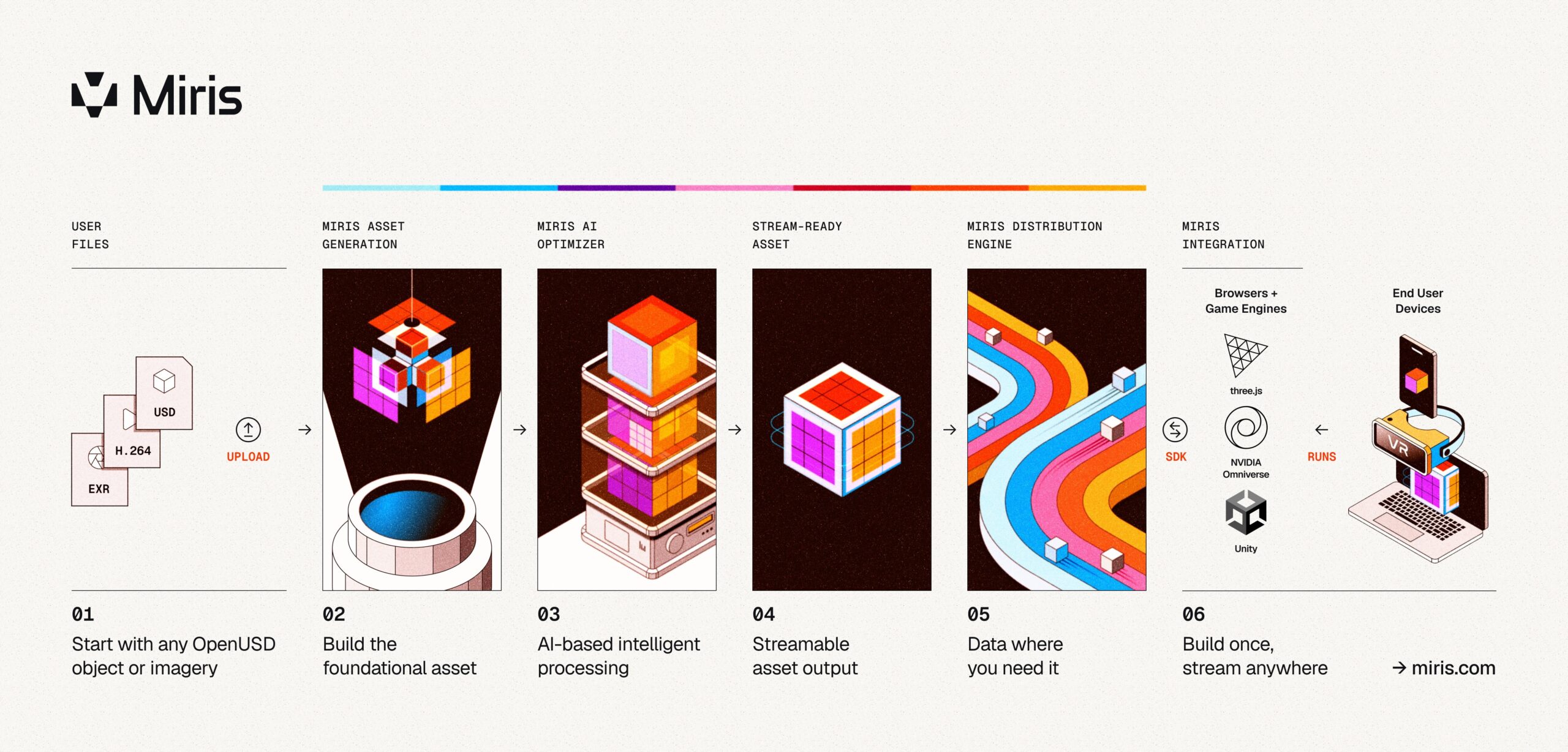

Start-up Miris takes an atypical approach to 3D asset delivery, applying the same paradigm shift to spatial content that adaptive streaming brought to video, McDonald says. Instead of downloading complete assets or renting cloud GPUs to render frames remotely, Miris uses adaptive spatial streaming that reconstructs the data for end-user devices in real time.

The company says its platform addresses the four main bottlenecks plaguing high-fidelity 3D imagery on the Web.

Because Miris is a managed service, 3D content owners simply upload a 3D asset to the Miris asset generation system, which runs it through Miris’ CoreWeave cloud, built on Nvidia-based infrastructure, to convert it into the Miris streamable format. As part of the process, the assets are optimized with AI and ML using the GPUs.

Content loads in under a second (Miris says it is aiming for 200 ms or less in most conditions) and refines progressively.

Once conversion is complete, developers load the Miris assets into their applications via the Web SDK.

After processing the asset, the Miris distribution engine outputs volumetric content that can be streamed and distributed across multiple platforms and devices at a cost comparable to video, Miris states, though actual costs have not been shared yet. Through the company’s cloud-based platform, the asset scales to millions of concurrent users at content delivery network (CDN) economics, without any additional infrastructure costs, the company adds. The platform preconditions the 3D asset for on-demand delivery, transmitting only the geometric and texture data needed for the current view, so the spatial data renders immediately and refines progressively as more data arrives. It also adapts to network conditions, so assets remain responsive and detailed while minimizing memory requirements.
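Miris has not published how its distribution engine selects data, so the following is only a minimal sketch of the general idea described above: request what is in view, coarse detail first, within whatever the network can carry. The `Chunk` type, its fields, and the `plan_requests` and `budget_for` helpers are all hypothetical names chosen for illustration and are not part of the Miris Web SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """Hypothetical unit of streamable geometry or texture data."""
    chunk_id: str
    level: int       # 0 = coarsest level of detail; higher = finer
    size_bytes: int
    in_view: bool    # whether the chunk intersects the current view


def budget_for(bandwidth_bps: float, interval_s: float) -> int:
    """Bytes that fit in one request interval at the measured bandwidth."""
    return int(bandwidth_bps / 8 * interval_s)


def plan_requests(chunks: list[Chunk], budget_bytes: int) -> list[str]:
    """Pick in-view chunks, coarse to fine, until the byte budget is spent."""
    visible = sorted(
        (c for c in chunks if c.in_view),
        key=lambda c: (c.level, c.chunk_id),
    )
    plan: list[str] = []
    spent = 0
    for chunk in visible:
        if spent + chunk.size_bytes > budget_bytes:
            break  # stop here so finer data never jumps ahead of coarser data
        plan.append(chunk.chunk_id)
        spent += chunk.size_bytes
    return plan


if __name__ == "__main__":
    scene = [
        Chunk("body-l0", 0, 1_000, True),
        Chunk("body-l1", 1, 4_000, True),
        Chunk("body-l2", 2, 16_000, True),
        Chunk("back-l0", 0, 1_000, False),  # out of view, never requested
    ]
    print(plan_requests(scene, budget_bytes=6_000))
```

In this sketch, a slower connection simply yields a smaller budget, so the viewer receives the coarse levels first and the finer levels in later intervals, which is one plausible way to get the "renders immediately, refines progressively" behavior.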

“So, we’re really in a consumption-based sort of pattern in terms of we’re only having customers pay for what they actually actively stream, in effect, treating it similar to video economics,” adds McDonald.

The Miris architecture diagram. (Source: Miris)

For now, the system supports assets authored in the OpenUSD format.

According to Miris, even a 500 MB product model will load and respond as quickly as a simple 5 MB scene via the platform.

“We’re putting GPUs at the front of the process rather than at the end, which is important to scale,” McDonald explains. Doing so removes the per-user GPU constraint that comes with other options, enabling the 3D content to be sent to billions of people in parallel.

According to McDonald, when a person zooms in, material quality and fine details can be seen, and when they zoom out of the asset, those details get kicked out of memory.

“This ends up being really important for things like physical AI and simulation, where you’re really trying to retain as much memory as possible and be as efficient as possible. Because we’re streaming, we can easily evict things out of memory, load things into memory, and be highly efficient relative to what you’re looking at,” says McDonald.
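The eviction behavior McDonald describes resembles a byte-budgeted cache. As a rough illustration only, and not a description of Miris’ actual implementation, the sketch below uses a least-recently-used policy, which is an assumed choice; the `ChunkCache` class and its methods are hypothetical.

```python
from collections import OrderedDict


class ChunkCache:
    """Hypothetical in-memory store that evicts least-recently-used chunks."""

    def __init__(self, budget_bytes: int) -> None:
        self.budget_bytes = budget_bytes
        self.used_bytes = 0
        self._chunks: OrderedDict[str, bytes] = OrderedDict()

    def touch(self, chunk_id: str) -> bool:
        """Mark a chunk as recently viewed; return False if it is not resident."""
        if chunk_id in self._chunks:
            self._chunks.move_to_end(chunk_id)
            return True
        return False

    def put(self, chunk_id: str, payload: bytes) -> list[str]:
        """Store a streamed chunk and return the ids evicted to make room."""
        if chunk_id in self._chunks:
            self.used_bytes -= len(self._chunks.pop(chunk_id))
        self._chunks[chunk_id] = payload
        self.used_bytes += len(payload)

        evicted: list[str] = []
        # Drop the oldest entries first, but never the chunk just inserted.
        while self.used_bytes > self.budget_bytes and len(self._chunks) > 1:
            old_id, old_payload = self._chunks.popitem(last=False)
            self.used_bytes -= len(old_payload)
            evicted.append(old_id)
        return evicted
```

Because evicted detail can be streamed again when the viewer zooms back in, a cache like this can stay small without permanently losing fidelity.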

Material details including the sheen in this jacket are visible in this asset. (Source: Miris)

There’s a wide variety of assets that can benefit from streaming in this way, opening the door to a number of different efficiencies that apply across numerous verticals such as interactive retail, physical AI, digital twins, architectural visualization, and more.

Interested parties can sign up for the company’s public beta, which gives them full platform access. Those with beta access can use Miris with sample assets or their own content (up to 10 GB).
