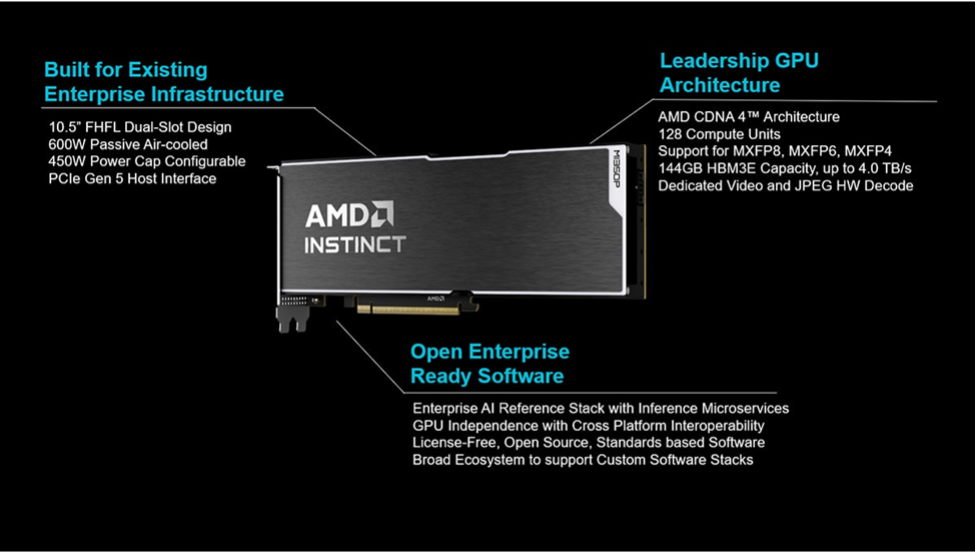

AMD introduced the Instinct MI350P PCIe accelerator to reduce infrastructure constraints in enterprise AI deployment. Many organizations face tradeoffs between cloud-based inference and the cost of upgrading on-prem systems to support large accelerator platforms. The MI350P is a dual-slot, air-cooled PCIe card designed for standard servers, enabling deployment without changes to power, cooling, or rack configurations.

The MI350P targets inference workloads, including agentic AI and RAG pipelines. It is not intended to replace dedicated GPU clusters but to extend existing CPU-based systems with incremental acceleration. This approach allows enterprises to scale AI workloads within current infrastructure, avoiding the capital and operational requirements of full GPU platform deployment.

The hardware design reflects that positioning. The card supports up to eight accelerators per air-cooled system, enabling incremental scaling rather than wholesale redesign. AMD built the MI350P with high-bandwidth memory and support for modern AI precision formats, including MXFP4, MXFP6, FP8, and INT8. These formats allow organizations to optimize performance and memory utilization depending on workload requirements. The card delivers an estimated 2,299 TFLOPS and peaks near 4,600 TFLOPS at lower precision, with 144GB of HBM3E memory and bandwidth reaching 4TB/s.

Those specifications matter less as raw numbers and more as indicators of efficiency. Enterprises increasingly prioritize throughput per watt, deployment simplicity, and cost predictability. AMD addresses those factors by combining sparsity acceleration with support for multiple precision formats. This approach reduces memory overhead and improves inference efficiency without requiring extensive tuning or architectural changes.

The software stack reinforces that strategy. AMD integrates the MI350P into an open ecosystem that includes Kubernetes GPU Operator support, AMD Inference Microservices, and compatibility with frameworks such as PyTorch. Enterprises can migrate workloads with minimal code changes, reducing the operational burden associated with new hardware deployment. AMD also distributes its enterprise AI reference stack as open-source software, eliminating licensing costs and improving transparency.

That open approach differentiates AMD from competitors that rely on vertically integrated ecosystems. Instead of locking customers into proprietary frameworks, AMD emphasizes interoperability and flexibility. Enterprises can integrate the MI350P into existing pipelines, tools, and orchestration environments. This lowers switching costs and reduces long-term dependency risks.

The product launch aligns with broader shifts in AMD’s business performance. Recent financial results highlight a transition in revenue drivers, with the Data Center segment emerging as the primary source of growth. Revenue reached $10.3 billion in the first quarter, supported by a 57% year-over-year increase in Data Center revenue. EPYC CPUs and Instinct GPUs drove that expansion, reflecting rising demand for AI infrastructure.

Client and gaming segments also contributed to growth, though at a slower pace. Ryzen processors gained share in the client market, while Radeon GPUs supported gaming revenue. However, the narrative now centers on AI. Enterprise and hyperscale customers are reallocating budgets toward AI infrastructure, and AMD has positioned its CPU and GPU portfolio to capture that shift.

Lisa Su emphasized the role of agentic AI in driving demand. Workloads have shifted toward inference-heavy applications, increasing the importance of CPUs and balanced compute architectures. AMD benefits from its strength in CPUs while continuing to expand its GPU footprint. This dual capability allows AMD to participate across multiple layers of the AI stack.

The market response reflects that positioning. AMD’s stock has climbed sharply over the past year, supported by strong execution and rising AI demand. Investors view the company as a beneficiary of the transition toward distributed AI workloads, particularly as enterprises seek alternatives to cloud-only strategies. Forecasts for the next quarter point to continued growth, with revenue expected to reach approximately $11.2 billion.

The MI350P fits directly into that trajectory. It extends AMD’s reach into enterprise environments that previously lacked the infrastructure for large-scale GPU deployment. By enabling inference within existing data centers, AMD reduces barriers to adoption and accelerates time to deployment. Organizations can move from pilot projects to production systems without major architectural changes.

This approach also aligns with evolving enterprise priorities. Companies want to control data locality, manage costs, and maintain flexibility in their AI strategies. Cloud-based inference introduces variable costs and potential privacy concerns. Dedicated GPU clusters require capital investment and operational complexity. The MI350P provides a middle path that balances performance, cost, and deployment simplicity.

AMD’s broader enterprise AI portfolio reinforces that positioning. The company offers a range of compute options, from CPUs to PCIe accelerators to rack-scale systems. This portfolio allows customers to choose configurations that match their specific workloads and growth trajectories. Instead of forcing a single architecture, AMD supports a continuum of deployment models.

The combination of hardware, software, and market positioning reflects a deliberate strategy. AMD aims to lower the barriers to AI adoption while maintaining performance and scalability. The MI350P serves as a practical entry point for enterprises that want to expand AI capabilities without committing to large-scale infrastructure changes.

AMD’s strategy centers on practicality. The company recognizes that enterprises do not adopt AI in a single step. They expand incrementally, constrained by infrastructure, cost, and operational complexity. By delivering a solution that fits existing environments, AMD accelerates adoption while maintaining flexibility. The MI350P does not redefine AI infrastructure, but it removes a key obstacle, allowing organizations to move forward with fewer constraints and clearer economics.

What do we think?

AMD is executing with discipline and clarity. The MI350P aligns with real enterprise constraints rather than idealized architectures. Data center growth confirms demand, while the product extends its reach into a broader customer base. AMD still trails Nvidia in GPUs, but it leverages CPU strength and open ecosystems effectively. The strategy hinges on execution and supply, not vision.

The MI350P signals a potential inflection point in AI deployment. Enterprises no longer need to choose between cloud dependency and large-scale infrastructure rebuilds. Instead, they can scale AI within existing environments. This shift lowers adoption barriers and expands the addressable market for AI hardware. If demand continues to favor flexible, incremental deployment models, AMD’s approach could mark an inflection point in how organizations build and scale AI systems.

The AMD M1350P is included in the 230 AI Processors in our AIP tracker service, which is part of our AIP library of reports. This includes the AIP Quarterly update, the AIP Annual market report with spreadsheet, the Photonic AIP report, the Neuromorphic AIP report, and the FPGAs in AI report. If you have any interest in the 141 AIP companies and their products, we have the report for you.

IF YOU LIKE THIS ARTICLE, SHARE IT.