The history of programmable graphics is a story of gradual democratization—moving control from fixed hardware pipelines to code that developers write themselves. That shift, which began with Nvidia’s GeForce 3 and DirectX 8 in 2001, laid the technical foundation for everything that followed: general-purpose GPU computing, AI training, and the trillion-dollar AI infrastructure industry. Intel’s long and complicated journey to a competitive discrete GPU is a parallel story of missed windows, canceled projects, and a two-decade gap between ambition and market reality.

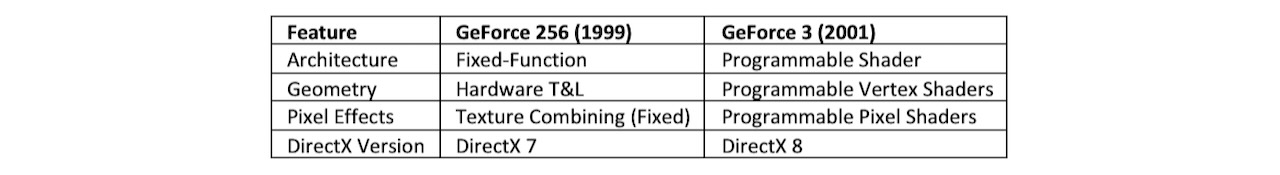

The programmable GPU era did not begin with the first announced GPU. Nvidia launched the GeForce 256 in 1999 and marketed it as the world’s first GPU—a chip that took transform and lighting (T&L) calculations off the CPU and handled them in dedicated hardware. That was a real advance, but the pipeline remained fixed-function: Developers could not write custom code to manipulate pixels or vertices. The hardware executed a predetermined sequence of operations with no deviation.

DirectX 8.0, released in November 2000, defined the next frontier. It introduced Shader Model 1.0, which specified programmable vertex and pixel shaders—code that developers could write to control exactly how geometry was transformed and how each pixel’s final color was computed. Nvidia’s GeForce 3, launched in 2001, was the first card to implement this in hardware through its nfiniteFX Engine. Vertex Shader 1.0 gave developers control over geometry at the per-vertex level; Pixel Shader 1.0 and 1.1 extended that control to per-pixel color and surface properties. The fixed-function era ended there.
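To make the distinction concrete, here is a toy sketch in Python. It is not HLSL or DirectX 8 shader assembly, and every function name in it is hypothetical. It only illustrates the idea that a fixed-function stage applies one built-in operation, while a programmable stage runs whatever per-vertex or per-pixel function the developer supplies.

```python
from typing import Callable, List, Tuple

Vec3 = Tuple[float, float, float]   # (x, y, z) position or (r, g, b) color


def fixed_function_vertex(position: Vec3) -> Vec3:
    """Fixed-function style: one built-in transform the developer cannot change."""
    x, y, z = position
    return (x * 2.0, y * 2.0, z * 2.0)


def run_vertex_stage(vertices: List[Vec3],
                     vertex_shader: Callable[[Vec3], Vec3]) -> List[Vec3]:
    """Programmable style: apply a developer-supplied function to every vertex."""
    return [vertex_shader(v) for v in vertices]


def run_pixel_stage(pixels: List[Vec3],
                    pixel_shader: Callable[[Vec3], Vec3]) -> List[Vec3]:
    """Programmable style: apply a developer-supplied function to every pixel."""
    return [pixel_shader(p) for p in pixels]


# Hypothetical developer-written "shaders" (illustrative only).
def shear_vertex_shader(position: Vec3) -> Vec3:
    """Shift each vertex upward in proportion to its x coordinate."""
    x, y, z = position
    return (x, y + 0.5 * x, z)


def grayscale_pixel_shader(color: Vec3) -> Vec3:
    """Replace each pixel's color with the average of its channels."""
    r, g, b = color
    gray = (r + g + b) / 3.0
    return (gray, gray, gray)


if __name__ == "__main__":
    print(run_vertex_stage([(2.0, 0.0, 1.0)], shear_vertex_shader))
    print(run_pixel_stage([(0.0, 0.5, 1.0)], grayscale_pixel_shader))
```

Swapping `shear_vertex_shader` for any other function changes the result without touching the pipeline itself, which is the flexibility that fixed-function hardware could not offer.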

Table 1. The transition from fixed to programmable shaders.

DirectX 9 arrived in 2002 with Shader Model 2.0, extending program length, adding conditional execution, and introducing HLSL, a C-like language that replaced the assembly-adjacent shader code of the DirectX 8 era and brought shader development within reach of a much wider developer base. DirectX 10, announced in 2006, brought geometry shaders, which could generate new vertices and primitives dynamically rather than just transforming existing ones. DirectX 11, released in 2009, introduced compute shaders, enabling general-purpose workloads to run directly on GPU hardware through the graphics API itself. That general-purpose model is the kind of architecture that would later underpin neural network training.

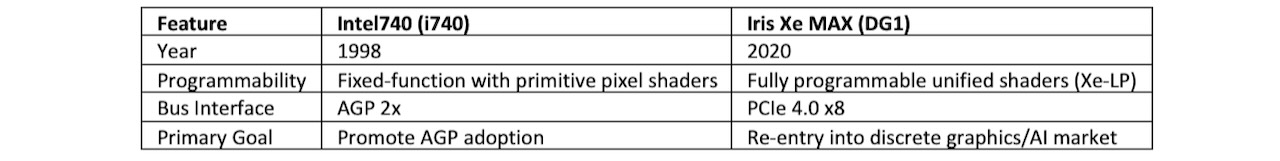

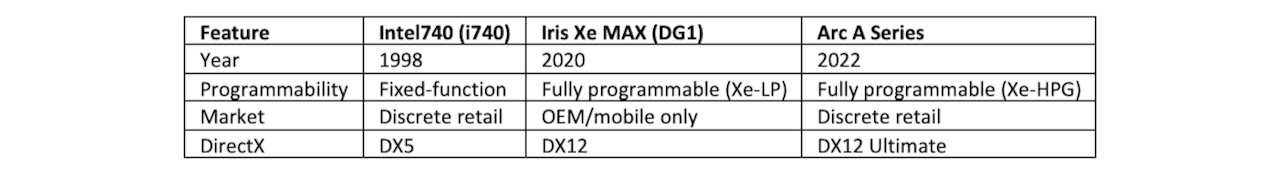

Intel’s path through this evolution was slower and more complicated. Intel’s first discrete graphics effort, the i740 in 1998, predated programmable shaders entirely. It accelerated 3D rendering during the DirectX 5 fixed-function era and served primarily as a vehicle for driving AGP adoption. It had no programmable shader units.

Table 2. Intel’s transition from fixed to programmable shaders.

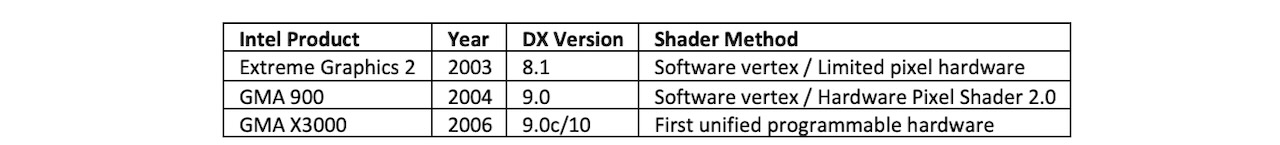

Intel’s first product with nominal DirectX 8 compatibility arrived in 2003 with the Extreme Graphics 2, integrated into the i865 and i875 chipsets. The compatibility came with a significant caveat: The hardware ran Pixel Shader 1.1 instructions through a driver-level wrapper over a fixed-function pixel pipeline, and vertex shaders ran entirely on the CPU through software emulation. Because the CPU is itself programmable, this approach technically met DirectX 8’s vertex shader requirements, but it did so at a cost in CPU performance and without any dedicated vertex hardware on the graphics side.

The first Intel product with dedicated hardware pixel shader units arrived in 2004 with the GMA 900, integrated into the 915G chipset. It supported DirectX 9 Pixel Shader 2.0 through four dedicated pixel pipelines, making it Intel’s first integrated GPU (iGPU) with programmable pixel hardware. Vertex shaders still ran on the CPU. Intel did not put a dedicated hardware vertex shader unit on a graphics chip until the GMA X3000 in 2006, five years after Nvidia’s GeForce 3 first demonstrated programmable vertex shaders in consumer hardware.

Table 3. The first wave of integrated GPUs (iGPUs).

Intel’s first genuine attempt at a discrete programmable GPU came with Larrabee in 2009—a massively parallel architecture designed to run DirectX 8 through 11 via software-defined pipelines rather than fixed hardware blocks. Larrabee was technically ambitious and commercially misaligned: It consumed too much power, ran games too slowly, and arrived when Nvidia and AMD had fully optimized hardware shader pipelines that Larrabee could not match. Intel canceled it as a consumer product and repurposed the architecture into the Xeon Phi HPC coprocessor, where its massive thread count made more sense.

Intel returned to discrete graphics in 2020 with the Iris Xe MAX, also known as DG1. Built on the Xe-LP architecture with 96 Execution Units and full DirectX 12 and OpenCL support, it represented Intel’s first modern unified shader architecture in a discrete package. DG1 reached only OEM and mobile configurations—it never shipped as a retail discrete card.

Table 4. Intel’s GPU evolution.

The Arc A Series, specifically the Arc A380 in 2022, became Intel’s first modern discrete GPU sold at retail. It supported DirectX 12 Ultimate and brought Intel back into the consumer discrete market for the first time since the i740, 24 years earlier.

What do we think?

The programmable shader story is also the AI story: Every major capability advance in GPU computing—general-purpose compute, neural network training, large model inference—runs on the programmable architecture that Nvidia introduced with GeForce 3 and DirectX 8 in 2001. Intel spent two decades trying to enter a market it helped define through DirectX, and finally succeeded commercially with Arc in 2022. The lesson for AI hardware is the same as it was for graphics: Programmability beats fixed-function pipelines when the workload keeps changing. In Part III, we cover the AI GPU story.

The transition from fixed-function to programmable shaders in 2001 was an inflection point that most observers missed until years later—because its consequences arrived gradually, through game engines, developer tools, CUDA, and a series of critical standards and APIs. The current shift from GPU-dominated AI training to heterogeneous inference architectures has the same shape. The inflection point isn’t a single product launch; it’s the moment programmability becomes the baseline expectation and the hardware that lacks it stops being competitive. In graphics, that happened between DirectX 7 and DirectX 8. In AI, it’s happening now.

Continue with this evolution: Read Part II here and Part III here.
