RISC-V is quietly becoming the connective tissue of AI hardware—from always-on embedded sensors to data center inference accelerators. Its open, modular ISA lets designers scale AI compute efficiently without vendor lock-in. Fixed-function cores handle simple classification tasks today; vector-extended clusters running alongside NPUs and GPUs already bridge edge AI and small multimodal models. Three distinct approaches now drive NPU integration directly into the RISC-V architecture itself, moving the ecosystem from CPU-plus-accelerator toward unified AI-native silicon.

RISC-V implementations now map quite closely to the expanding range of AI processor requirements. Fixed-function accelerators and DSP-class RISC-V cores are used for constrained workloads such as keyword detection, gesture recognition, and simple classification. As workloads become more demanding, hybrid designs that combine RISC-V CPUs, vector extensions, DSP functions, and NPUs can address vision processing, audio intelligence, and sensor fusion more efficiently than general-purpose cores alone.

The integration of RISC-V vector cores alongside GPUs or NPUs represents a structural shift. Combined architectures now enable on-device inference, edge AI, and small multimodal language models—workloads previously requiring cloud connectivity. This marks an inflection point where RISC-V hardware bridges deeply embedded systems and higher-order inference workloads. At the cognitive tier, chiplet-based SoCs and distributed multi-cluster architectures support real-time decision systems, adaptive robotics, and self-optimizing reasoning frameworks that require unified memory coherence across multiple dies or nodes.

Adapting NPUs directly to the RISC-V architecture is proceeding on three parallel tracks. The first and most established approach places a discrete NPU adjacent to a RISC-V CPU, effectively transplanting the traditional proprietary accelerator model into an open-ISA environment. That architecture works but carries an inherited limitation: the NPU and CPU communicate across a bus, which introduces latency and memory bandwidth constraints. Semidynamics attacks this problem differently, introducing a RISC-V ISA-only compute engine that integrates CPU, vector, and tensor operations into a single compute element, eliminating the CPU-to-accelerator boundary entirely and targeting 8 to 64 TOPS for LLMs, deep learning, and edge AI. Academic research explores a third path: dynamic MAC sharing, where a tightly integrated NPU opportunistically borrows the CPU’s multiply-accumulate unit when the RISC-V cores sit idle. The reported results are a 1.87× speedup at 93.5% efficiency and a 70% power reduction at low frequency, a compact solution for constrained edge silicon with no dedicated accelerator budget.
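The speedup and efficiency figures for dynamic MAC sharing are consistent with one simple reading: if borrowing the CPU's MAC unit is assumed to double the MAC resources available to the NPU, the ideal speedup is 2×, and 1.87× measured against that ideal works out to 93.5%. The research summarized here does not say how efficiency was defined, so the 2× ideal is an assumption, and the function name in this minimal Python sketch is hypothetical.

```python
def sharing_efficiency(measured_speedup: float, ideal_speedup: float) -> float:
    """Return the fraction of the ideal speedup that was actually achieved."""
    if ideal_speedup <= 0:
        raise ValueError("ideal_speedup must be positive")
    return measured_speedup / ideal_speedup


# Assumption (not stated in the article): sharing the CPU's MAC unit doubles
# the MAC resources available to the NPU, so the ideal speedup is 2.0.
# The 1.87 value is the speedup quoted in the text.
efficiency = sharing_efficiency(measured_speedup=1.87, ideal_speedup=2.0)
print(f"Efficiency relative to ideal: {efficiency:.1%}")
```

Under that assumption, the shortfall from the ideal would reflect the cycles in which the CPU needs its own MAC unit and the NPU cannot borrow it.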

Figure 1. An AI processor using RISC-V cores along with vector extensions and NPU cores. (Source: JPR)

The most commercially significant NPU development is the S8200 from MIPS, which is now part of GlobalFoundries. The S8200 tightly couples RISC-V application cores with AI engines, enabling low-latency data exchange between general-purpose processing and inference, and supports transformer-class models alongside CNNs through MIPS-optimized compilers for PyTorch and TensorFlow. The software-first design philosophy allows developers to model and optimize inference workloads on a virtual platform before silicon exists, enabling hardware-software co-design from day one. ForwardEdge ASIC, a Lockheed Martin subsidiary, has already selected the S8200 for a mission-critical autonomous platform ASIC, with first-silicon reference platforms expected in 2027. Academic frameworks such as PyTorchSim extend this further, modeling NPUs using a custom RISC-V ISA compiled through MLIR and LLVM and simulated via extended Gem5 and Spike tools, giving researchers a common toolchain to evaluate NPU architectures before committing to silicon.

RISC-V vector extensions also connect divergent memory hierarchies, from SRAM-based embedded implementations to disaggregated or pooled memory in semi-cognitive systems. Increasing intelligence drives more parallelism, tighter coherency requirements, and higher bandwidth demands. RISC-V’s modular ISA scales AI compute from intelligent sensors to distributed cognition-class systems while maintaining architectural consistency across that full spectrum.

Consolidation is reshaping the open-ISA landscape. Meta’s acquisition of Rivos brings RISC-V-based AI server design expertise into one of the world’s largest AI operators, signaling big tech’s intent to build proprietary silicon for LLM inference and multimodal workloads on open architecture. GlobalFoundries’ acquisition of MIPS Technologies aligns a once-competing ISA fully with RISC-V, adding foundry-level design support for open-architecture customers and strengthening the end-to-end supply chain. New entrants, including Ahead Computing, continue to join the ecosystem alongside these consolidations.

Andes Technology and SiFive remain important independent RISC-V IP suppliers, while China’s RISC-V activity continues to grow through companies and institutions such as Nuclei, Alibaba DAMO Academy, and StarFive. Europe, Japan, and China are also supporting open-ISA activity as part of broader technology-sovereignty and supply-chain-resilience strategies. That creates a slightly uncomfortable duality: RISC-V is an open global architecture, but its adoption is increasingly shaped by national and regional industrial policy.

What do we think?

RISC-V in AI hardware is moving from control-plane use into more central compute roles. The important point is not that RISC-V suddenly replaces GPUs, NPUs, or proprietary architectures. It is that RISC-V gives designers a common, extensible base on which CPUs, vector units, tensor engines, and software-defined accelerators can be brought closer together.

The market is now testing three broad models: a RISC-V CPU beside an NPU, a more unified RISC-V compute engine, and compact research-led approaches that share compute resources between CPU and accelerator. Each model has a place. The first is easiest to adopt, the second is more architecturally ambitious, and the third may suit tightly constrained edge devices.

The GlobalFoundries/MIPS deal and Meta’s reported move for Rivos show that RISC-V is no longer just an academic or embedded curiosity. Foundries, IP companies, hyperscalers, and national ecosystems all see value in an open, extensible processor base. The risk is fragmentation. The opportunity is that RISC-V gives the AI hardware market a way to customize without starting from scratch every time.

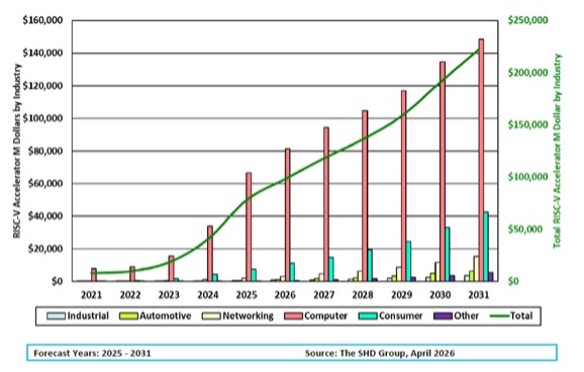

Figure 2. RISC-V for AI forecast (in $B) by industry. (Source: SHD Group)

RISC-V, therefore, does look like part of the next phase of AI silicon.

Proprietary ISAs defined the first two decades of AI hardware. The next decade will increasingly build on an open, extensible substrate—and RISC-V is that substrate.

We are tracking all these developments and more with our AI Processor Tracking Service and associated AIP reports. We have just announced a supplemental report on neuromorphic AI processors.
