SambaNova and Intel showed a new heterogeneous inference architecture built specifically for agentic AI workloads. The system splits tasks across GPUs for prefill, SambaNova RDUs for decode, and Intel Xeon 6 CPUs for orchestration and tool execution. This partnership is designed to deliver efficient, production-ready infrastructure for complex coding agents and enterprise applications. The solution becomes available in the second half of 2026.

SambaNova and Intel expanded their collaboration to create a disaggregated inference solution optimized for agentic AI systems. The architecture combines three compute types: GPUs handle the highly parallel prefill stage, SambaNova SN50 RDUs manage the decode stage, and Intel Xeon 6 processors serve as both host CPUs and action CPUs for agentic tool calls, system orchestration, and integration with existing enterprise applications.

This design directly addresses the evolving requirements of agentic AI. Unlike simple chatbot token generation, agentic systems must interact with legacy applications, databases, APIs, and workflows, many of which have run on x86 infrastructure for decades. CPUs play a critical role because they bridge AI agents with these established systems. SambaNova CEO Rodrigo Liang noted that AI is shifting from basic token output to broader use cases that require seamless integration with long-running enterprise software.

In SambaNova’s proposed setup, the entire decode phase runs on its RDUs to avoid shuttling activations between different accelerator types. This approach differs from some competing architectures that split decode stages across multiple hardware platforms. The RDUs operate on standard air cooling and consume less than 30 kW per rack, which provides significant deployment flexibility inside existing data centers.

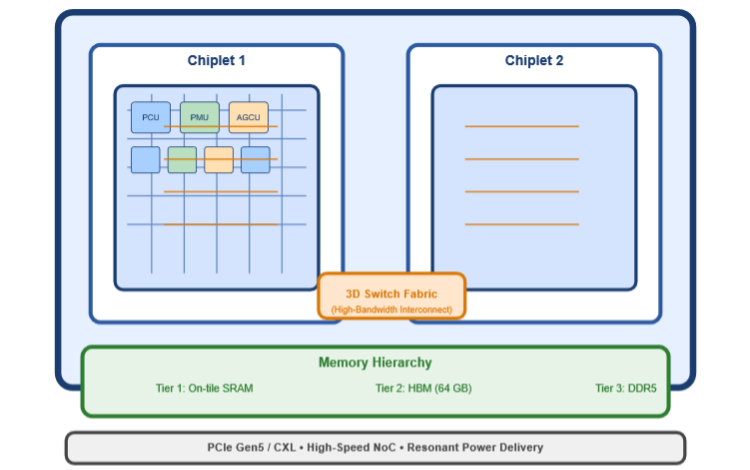

Figure 1. Block diagram of the SambaNova SN50 Reconfigurable Dataflow Unit (RDU), a dual-chiplet design (5 nm). (Source: JPR from SambaNova information)

The solution allows organizations to continue using their current GPU investments for the prefill phase, while deploying SambaNova RDUs for high-throughput, low-latency token generation. Intel Xeon 6 processors handle agentic coordination, code compilation, tool execution, and system-level control. SambaNova also standardizes on Xeon 6 as the host CPU for its SN50 RDU cards going forward.
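The division of labor described above can be sketched as a simple stage-to-hardware routing table. This is an illustrative toy, not SambaNova or Intel software: the pool labels (`gpu`, `rdu`, `xeon`), the `Stage` enum, and the `simulate_agent` helper are all hypothetical names, and the loop structure (prefill, then decode, then a tool call before the next turn) is an assumption about how a typical agent run proceeds.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"      # prompt processing, highly parallel
    DECODE = "decode"        # token-by-token generation
    TOOL_CALL = "tool_call"  # agentic actions: compile code, query a database, call an API


# Stage-to-hardware mapping as described in the article.
# The pool names are hypothetical labels, not product identifiers.
STAGE_TO_POOL = {
    Stage.PREFILL: "gpu",
    Stage.DECODE: "rdu",
    Stage.TOOL_CALL: "xeon",
}


@dataclass
class AgentTrace:
    """Records which hardware pool handled each stage of one agent run."""
    steps: list = field(default_factory=list)

    def run(self, stage: Stage) -> None:
        self.steps.append((stage.value, STAGE_TO_POOL[stage]))


def simulate_agent(num_tool_calls: int) -> AgentTrace:
    """Simulate one agent run (assumed shape): every turn is prefill then
    decode, and a tool call separates consecutive turns."""
    trace = AgentTrace()
    for turn in range(num_tool_calls + 1):
        trace.run(Stage.PREFILL)
        trace.run(Stage.DECODE)
        if turn < num_tool_calls:
            trace.run(Stage.TOOL_CALL)
    return trace


if __name__ == "__main__":
    for stage, pool in simulate_agent(num_tool_calls=1).steps:
        print(f"{stage:>9} -> {pool}")
```

Even in this toy version, each tool call triggers another prefill and decode pass, which suggests why the article emphasizes decode efficiency and fast CPU-side tool execution for agentic workloads.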

Both companies are working together on software integration and system-level testing. The joint solution supports popular open-source inference frameworks such as vLLM and SGLang, as well as Nvidia’s NIXL library for standardized data transfers between heterogeneous components. This standardization helps simplify deployment of mixed-hardware environments.

The architecture targets enterprises, cloud providers, and sovereign AI programs that require production-scale agentic AI while meeting strict data residency and security requirements. It deploys in conventional air-cooled data centers without the need for specialized liquid cooling or new power infrastructure. This makes the solution particularly suitable for financial services, healthcare, defense, and government applications.

Agentic AI workloads expose clear limitations in GPU-only stacks. While GPUs perform well in parallel prefill tasks, real-world agentic performance depends heavily on efficient decode and fast interaction with external tools and applications. The SambaNova-Intel design aims to optimize each stage of the pipeline with the most suitable hardware.

Figure 2. SambaNova’s SN50 RDU. (Source: SambaNova)

SambaNova’s SN50 RDU delivers strong decode performance with its reconfigurable dataflow architecture. Intel Xeon 6 provides high memory bandwidth, dense PCIe lanes, and on-die accelerators that speed up compilation and vector database operations. Together, the system promises improved end-to-end latency and better overall token economics for complex agentic workflows.

The full heterogeneous solution, including an integrated software stack and joint validation, will become commercially available in the second half of 2026.

What do we think?

SambaNova and Intel present a practical heterogeneous blueprint for agentic inference. The combination of GPUs, RDUs, and Xeon 6 processors addresses real production needs for interactivity and enterprise integration. While software optimization work continues, the architecture leverages existing data center infrastructure and reduces single-vendor dependency. This balanced approach improves both performance and deployability for large-scale agentic AI.

This partnership marks an inflection point in AI. Agentic systems reveal the limits of GPU-only infrastructure and accelerate the shift toward heterogeneous architectures that optimize each stage of the pipeline. By pairing GPUs for prefill, SambaNova RDUs for decode, and Xeon 6 CPUs for orchestration and tool execution, SambaNova and Intel demonstrate that future AI infrastructure will rely on specialized hardware working together rather than a single dominant accelerator. If widely adopted, this approach will drive tighter model-hardware co-design, lower long-term costs, and stronger integration between AI agents and enterprise applications.

With 138 companies offering 188 AI processors, and all of the hyperscaler powerhouses building their own AIPs, it’s difficult to figure out who is going to buy these amazing chips. But if you want to keep up to date on who’s doing what, including getting funding, introducing new parts, or being acquired, then you should subscribe to our AI Processor Tracking Service, which is available 24/7 and updated just about every day.
