Meta is continuing its quest in the metaverse and recently showed research that uses photorealistic avatars to conduct face-to-face conversations in virtual reality. On the back end, a good deal of work is involved in achieving the lifelike codec avatar, but Meta is simplifying and streamlining that process. On the front end, things are simpler: the experience is delivered through a Meta Quest headset, in this case the Meta Quest Pro.

What do we think? Yes, we have all poked fun at Meta and its chief, Mark Zuckerberg, who has gone all in on the metaverse, so much so that he changed Facebook’s iconic branding to reflect that commitment. And then we waited. We chuckled at the company’s so-called updated 3D avatars in early 2022, which were customized cartoon characters. Later that year, Meta’s Horizon avatars went from moving in virtual space as if they were strapped to hoverboards, to sprouting legs—what an evolution. One year later, Zuckerberg stifled the snickers by showing something really extraordinary. It’s not fully realized yet, but it’s obviously well on its way—Hollywood-quality realistic avatars for communicating in VR.

Meta researchers working on new communication tech using lifelike avatars within VR space

In the popular space-age cartoon The Jetsons, which debuted in the 1960s, the family and others used a videophone to communicate with friends, family, coworkers, and so forth. In fact, the videophone was the quintessential device of the future, whether in Hollywood productions or in real-life concepts from Bell Telephone, AT&T, and others. The progression toward real-life videoconferencing spanned decades, and for most of that time the technology was a novelty. Certainly, most people did not believe it would become commonplace, until it did.

In a short time span, we progressed from telephone conferencing (no video) to full-blown real-time video and even videoconferencing using VR devices and avatars. In 2010, the iPhone began to popularize the video communication concept with FaceTime. Thanks to our computers (and the pandemic), Zoom calls won over the masses both inside and outside the workplace. Of course, there are other applications on the market as well, including WhatsApp, Facebook Messenger, Skype, Microsoft Teams, Discord, and more. Just when you were becoming comfortable with them, another next-generation communication concept looks to be on the horizon: face-to-face chats in VR between photorealistic avatars.

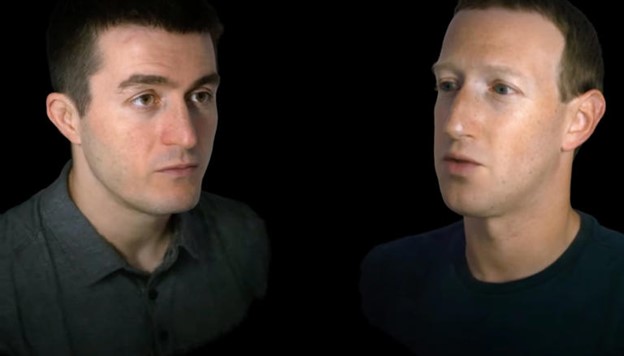

AI podcaster Lex Fridman gave us a glimpse of new technology that Meta (formerly Facebook) and its cofounder/CEO Mark Zuckerberg are working on. The face-to-face communication in the metaverse that they demonstrated does not entail a still photo or video of those communicating. Rather, the process involves avatars of the participants that are so photorealistic they appear to be the real thing. The conversation takes place between the avatars within a shared virtual space, while the actual humans, each wearing a Meta Quest headset, are located many miles apart.

Fridman called the experience and technology “incredible” and described the avatars as lifelike, going so far as to indicate they cross the uncanny valley, a concept described further in our DCC report. Having viewed the recording of the interview, we can say his description was on the mark.

The photorealistic avatars, however, did not simply appear with a click of a mouse. For the interview between Fridman and Zuckerberg, the head and shoulders of both men were digitally scanned. The process took hours for each person and used various cameras as the men performed a multitude of expressions, typical of the thorough scanning session used to create a digital double for film. Zuckerberg said the company is streamlining the process to the point where it will take just a few minutes using a smartphone.

For years now, Meta Reality Labs has been working on realistic avatars called codec avatars, which are generative models of 3D human faces with performances that can be rendered efficiently.

Meanwhile, the VR headsets track the facial expressions of the two conversationalists, focusing on their eye and mouth movements, which are key in realistic face-to-face communication. (While the two used Meta Quest Pros for this experiment, Meta plans to enable the feature on any Quest headset.) The motion data is then combined with audio captured from the headset, and that combined data drives the person’s avatar, which is already loaded into the other person’s headset. According to Zuckerberg, there is virtually no lag time.
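To make that data flow a little more concrete, here is a minimal Python sketch of the idea: one headset packages tracked expression values together with a chunk of audio, and the other headset applies that packet to an avatar it has already loaded. Meta has not published the format it uses, so every name here (`FramePacket`, `PreloadedAvatar`, `jaw_open`, `left_eye_blink`) and the JSON encoding are our own assumptions for illustration, not Meta's implementation.

```python
import base64
import json
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class FramePacket:
    """Hypothetical per-frame payload: expression values plus audio."""
    timestamp_ms: int
    expression: Dict[str, float]  # e.g. eye and mouth values in [0, 1]
    audio_chunk: bytes            # raw audio captured by the headset

    def encode(self) -> bytes:
        # JSON is used only for readability; a real system would likely
        # use a compact binary format.
        return json.dumps({
            "t": self.timestamp_ms,
            "expr": self.expression,
            "audio": base64.b64encode(self.audio_chunk).decode("ascii"),
        }).encode("utf-8")

    @staticmethod
    def decode(raw: bytes) -> "FramePacket":
        obj = json.loads(raw.decode("utf-8"))
        return FramePacket(obj["t"], obj["expr"],
                           base64.b64decode(obj["audio"]))


@dataclass
class PreloadedAvatar:
    """Stand-in for an avatar already loaded on the receiving headset."""
    owner: str
    expression: Dict[str, float] = field(default_factory=dict)
    audio_bytes_received: int = 0

    def apply(self, packet: FramePacket) -> None:
        # Only the small driving signal crosses the network; the heavy
        # avatar model itself never has to be sent during the call.
        self.expression.update(packet.expression)
        self.audio_bytes_received += len(packet.audio_chunk)


# Sender side: combine tracked motion data with captured audio.
packet = FramePacket(0, {"jaw_open": 0.4, "left_eye_blink": 1.0},
                     b"\x00\x01" * 4)
wire_bytes = packet.encode()

# Receiver side: drive the avatar that is already in the headset.
avatar = PreloadedAvatar(owner="remote_user")
avatar.apply(FramePacket.decode(wire_bytes))
```

The point of the sketch is the division of labor described above: because the avatar is loaded ahead of time, only a small stream of expression and audio data needs to travel between headsets during the conversation.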

Meta introduced face, eye, and body tracking with the Quest Pro late last year.

Zuckerberg has not specified exactly when this feature will become available, other than to say that the company will begin adding it to its offerings in the next few years.

Meanwhile, Meta recently updated its Quest software to include additional avatar features. Although this gives users more customization capabilities in terms of skin tone, hair, clothing textures, and so forth, it by no means comes close to the photorealism shown in this demonstration.