Synaptics and Google have put the first production implementation of Google’s open-source Coral NPU into silicon—the Astra SL2619 device inference AI SoC. The combination of Synaptics’ Torq inference engine, the MLIR-based open-source toolchain, and Google’s Gemma 3 270M model delivers a complete device inference AI stack on a single dev board. The platform targets AI/ML engineers, ODMs, and OEMs building always-on, battery-constrained devices where cloud off-load is not viable.

The Synaptics Coral Dev Board runs on the Astra SL2619 device inference AI SoC, which integrates the 1 TOPS Torq inference engine, the first production implementation of Google’s open-source Coral NPU in silicon. Synaptics designed the SL2619 for ultra-low-power, always-on applications that use ambient sensing and require fast on-device (edge) inference in battery-constrained form factors. The board provides a full hardware interface set: CSI/DSI camera and display support, USB, microphone inputs, and Wi-Fi/BT connectivity via an M.2 expansion slot. Synaptics targets AI/ML engineers, system architects, ODMs, and OEMs who need an open, accessible platform for rapid prototyping.

Figure 1. SL2610 product line of SoCs—a high-level block diagram. (Source: Synaptics)

The Torq open-source toolchain uses MLIR-based compilation and supports popular ML frameworks, delivering a unified development flow from model optimization through deployment. Paired with Gemma—Google’s open-source device inference model family—the hardware-software stack gives developers a private, efficient foundation for device inference AI applications without cloud dependency. The Coral NPU and Torq toolchain are available now as part of the Astra SL2610 product line.

(Source: Synaptics)

Synaptics, in partnership with Grinn Global and RS Components, offers a limited-edition Coral Dev Board pre-configured with an out-of-box device inference AI experience running the Gemma 3 270M model. Grinn’s AstraSOM-2619 SoM powers the board, enabling immediate hands-on development of on-device generative and perception-based ML workloads without any additional setup.
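
To give a rough sense of why a model of this size suits a small device, the sketch below estimates weight storage for a 270M-parameter model at a few common precisions. The parameter count is taken nominally from the model name, and the bit widths are illustrative assumptions; neither Synaptics nor Google is quoted here on the precision the board actually uses, and activations, caches, and runtime overhead are ignored.

```python
# Back-of-the-envelope weight-storage estimate for a 270M-parameter model.
# Assumption: "270M" is treated as exactly 270 million parameters (nominal).
PARAMS = 270_000_000

# Assumption: illustrative storage widths in bits per weight, not vendor figures.
BITS_PER_WEIGHT = {"fp16": 16, "int8": 8, "int4": 4}


def weight_megabytes(params: int, bits: int) -> float:
    """Return weight storage in megabytes (1 MB = 10**6 bytes)."""
    return params * bits / 8 / 1_000_000


# Weight footprint per precision; excludes activations and runtime overhead.
footprints = {name: weight_megabytes(PARAMS, bits)
              for name, bits in BITS_PER_WEIGHT.items()}

for name, mb in footprints.items():
    print(f"{name}: {mb:.0f} MB")
```

Under these assumptions, halving the bits per weight halves the storage, which is why quantization matters so much on memory-constrained hardware.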

What do we think?

Google open-sourcing the Coral NPU, and Synaptics being first to implement it in production silicon, is a meaningful signal. It breaks the proprietary NPU-toolchain lock-in that has fragmented device inference AI development for years. The MLIR-based Torq toolchain plus Gemma 3 gives ODMs a complete, vendor-neutral stack at 1 TOPS and ultra-low power, a combination that will accelerate device inference AI deployment in high-volume consumer and industrial devices.

The Synaptics-Google partnership marks an inflection point for device inference AI development. Google open-sourcing the Coral NPU and pairing it with the MLIR/IREE compiler stack removes the two biggest barriers to device inference AI adoption—proprietary silicon toolchains and fragmented framework support. When a production SoC ships pre-integrated with an open NPU, an open compiler, and an open model family like Gemma 3, the cost and complexity of building always-on AI devices drop materially. That dynamic accelerates the transition from cloud-dependent inference to sovereign on-device AI at scale.

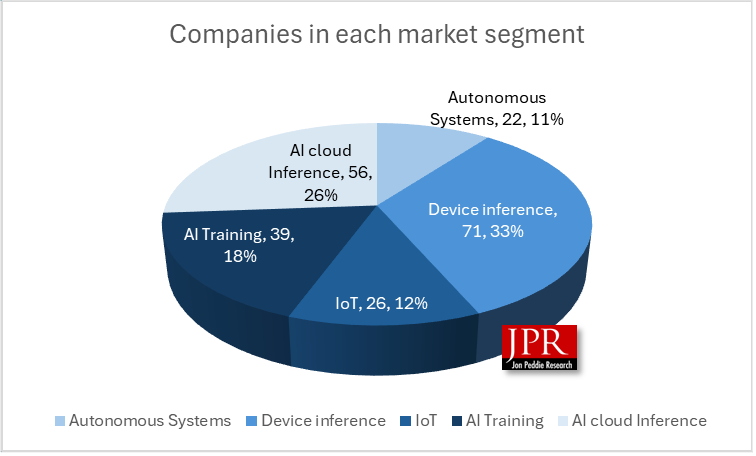

The device inference and IoT segments are where AI inferencing is headed. In our AI processor tracker, which covers 133 entries, we have identified and profiled 26 IoT and 71 device inference suppliers.

Figure 2. AI processor market segments and suppliers.

AI processor information on market segments and more can be found in the JPR Annual AI Processor Market Development Report here. Or, reach out to Jon Peddie at [email protected].
