The author reflects on his history with ray tracing and its impact on gaming. In 2014, he believed ray tracing would revolutionize mobile and consumer devices, but now, in 2023, he finds the reality different. Mobile ray tracing has not taken off due to a lack of games and compatible hardware. Consoles have some ray-tracing capabilities, but it’s computationally intensive and affects performance. Developers face a choice between using ray tracing or prioritizing a high frame rate, and gamers’ preferences seem to lean toward the latter. Some gamers are not convinced that the benefits of ray tracing outweigh the performance hit it causes.

I really wasn’t going to charge into this column like a four-polygon bull in a procedurally rendered china shop, but as soon as I mentioned that I had landed the cushiest job in GPU, my friend Alex asked me, “So, how long are you going to be able to avoid writing about ray tracing?”

Why is it even a question? Because ray tracing and I have history.

Back in 2014, I wrote that ray tracing would change the game “by bringing the next level of realism to mobile and consumer devices. Ray tracing is here, and it’s ready….” So many sighs. I was so young and hopeful then!

Zoom to 2023, and I was quoted on Pocket Gamer saying “games developers quite reasonably don’t want to create a bunch of content that’s reliant on ray tracing if there’s not a particularly high volume of phones with ray-tracing capabilities.”

The mobile ray-tracing story is a sad one, but all too simple to follow. No games, no hardware, and vice versa. Let’s not say it will never happen, but I’ve spent nearly a decade waiting for one side or the other to crack.

Bob Gardner, gaming guru at Netflix, recently told me bluntly: “The short answer is, I think this whole state is broken, it’s a ‘shitty, stable equilibrium,’ but I’m not sure how you can get around this due to business realities.”

“There’s next to nothing in the pipeline,” my friend [redacted], who works for a major mobile game developer, told me about 30 seconds ago.

Console at least has some ray tracing, but not much. Ray tracing is computationally intensive, so it can have a significant impact on performance. This is especially hard to balance on consoles, which have less powerful hardware than high-end PCs. We know the limits of the hardware aren’t going to change: the modestly ray-tracing-enabled PS5 and Xbox Series X are expected to stay in service until 2026 at least.

As a result, developers have been faced with choosing between using ray tracing or achieving a high frame rate. And for gamers, it’s Hobson’s choice: Enjoy what you get, or don’t; it’s all that’s coming. So, we are in this generation where not all console games support ray tracing, and even those that do often use it only for a few select effects, or in low-stakes areas like replay modes.

Because it is currently challenging for consoles to provide both advanced light rendering and a smooth frame rate simultaneously, the PS5 and Xbox Series X consoles mostly allow gamers to choose between prioritizing high frame rate performance or ray tracing.

Which one do gamers choose? A quick hangout on the Gran Turismo Reddit seems to favor frame rate by a significant margin, and a sweep over the reviews of Resident Evil 4 Remake finds a lot of umming and aahing about the value of ray tracing. (Switch it off unless you have a VRR TV seems to be the consensus.) Over on EA’s forums, there are plenty of gamers complaining about games in which the ray tracing isn’t switchable.

It seems at least some gamers are not yet convinced that ray tracing is worth the performance hit.

“Oh come on, David,” you say, “the most important trend is what is happening with the console’s kissing cousin, [drumroll] the PC.” Sure, we saved the best for last, but don’t get too excited, ray-tracing fans!

Rock, Paper, Shotgun has identified 17 ray-tracing-enabled games on the way (as of July 17, 2023). That’s slightly fewer than the number that will use Nvidia’s Deep Learning Super Sampling 3 (DLSS 3), a technology that is complementary to ray tracing and which might enable next-gen versions of engine-specific features like Lumen and Nanite. Already, ray tracing is looking like it might be yesterday’s thing in PC too.

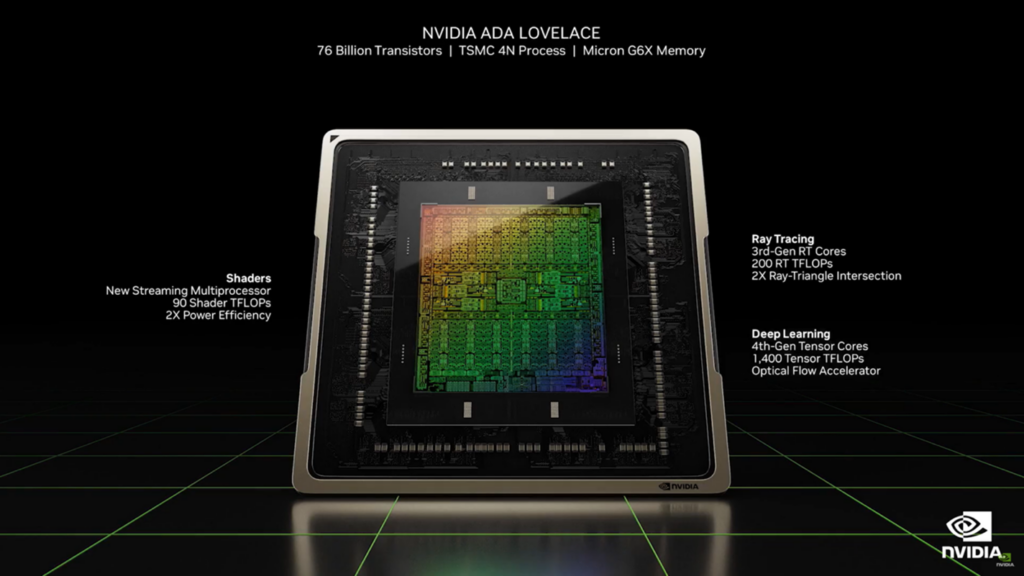

That possibility must be preying on the minds of the researchers working out what balance of GPU capability should go into next-generation platforms. Today, an Nvidia Ada Lovelace GPU has seven times more TFLOPS of deep learning performance than of ray-tracing performance.

That AI performance enables DLSS 3, with its ability to predictively generate frames that don’t have to be rendered by the GPU, to enhance frame rate and still (mostly) deliver image quality. While ray tracing comes with a frame rate cost, DLSS 3 comes with a frame rate benefit. Frame rate is the queen in gaming and has been for as long as I’ve been in the game.

Increasingly, we are going to see a breakaway from “traditional GPUs.” There is a desire to utilize the silicon area for other purposes such as ray tracing or AI. However, when we allocate a certain amount of space on a GPU chip for ray tracing or AI, it reduces the resources available for improving traditional GPU rendering performance (e.g., better/faster/higher resolution/higher refresh rate).

AI and super-resolution technology like DLSS compensate for this by allowing resources from FP32 FMAs (floating-point multiply and accumulate operations) to be allocated to other tasks, while still providing an equivalent, or even better, gaming experience.

AI can break the cycle of dependency between different technologies, as developers don’t need to put in as much effort to gain its benefits. This enables companies like Nvidia and AMD to gradually shift their investments toward AI—and potentially still leave room for experiments with ray tracing. This approach avoids the dilemma faced by mobile devices, in which investments in ray tracing may not provide significant value due to limited game support, whereas investments in AI offer multiple applications and can enhance existing content.

Many mobile devices have already dedicated substantial resources to AI acceleration and now need to support GPU innovation and investment shifts, rather than solely relying on brute-force methods. Additionally, with power limitations becoming more challenging at smaller process nodes, increasing the number of FMAs may not be an effective or feasible solution.

That sounds like a window for ray tracing still? Well….

The direction of future research seems to be toward two things. First, neural rendering. Neural rendering utilizes deep neural networks and physics engines to generate new images and video content from preexisting scenes. It empowers users to manipulate various scene elements like lighting, camera, geometry, shapes, and scene semantics. Second, next-generation generative AI, based on the processing capability of things like Nvidia’s Transformer Engine, which are driving a paradigm shift in machine learning and may help (game producers willing) enable game worlds that are profoundly realistic, not just visually, but experientially. How about a game that learns to see the world the way you do, and gradually adjusts to become your perfect gaming experience? Not beyond the realms of possibility.

So, will there be more ray-tracing performance in the next generation of GPUs? In PC and console, sure. AI engines will enhance existing ray tracing, and we will see more TFLOPS devoted to ray tracing too, maybe pushing 50% more frame rate in PCs. In more constrained platforms? I think we will see the far more versatile AI functionality favored over the rather single-benefit ray tracing. Mobile ray tracing remains elusive.

Overall, while definitely in the mix, ray tracing, I think, is going to take a back seat to AI. For a generation or two? Or permanently? I think the procedural nature of ray tracing, the potential for saved effort and greater realism, will bring it back to the fore down the line. But for now, it’s the perennial story: always the bridesmaid….