Qualcomm reported a strong fiscal second quarter, with revenue of $10.6 billion and non-GAAP EPS of $2.65, ahead of consensus. The real strength, however, came from diversification rather than handsets: Automotive revenue hit a record $1.3 billion, up 38% year over year, and Qualcomm expects to exit fiscal 2026 at an automotive run rate above $6 billion. The new talking point is custom silicon for a leading hyperscaler, with shipments expected later this calendar year. That puts Qualcomm into a crowded and increasingly lucrative ASIC market, where Broadcom, Arm, Google, Amazon, and others are already well established.

Qualcomm has a strong quarter and points to custom silicon. (Source: Qualcomm)

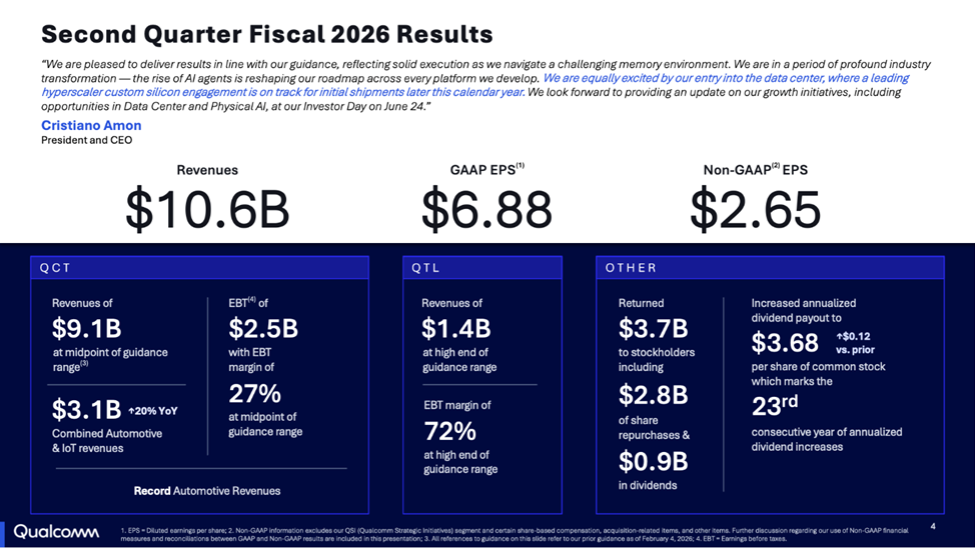

Qualcomm used its fiscal second-quarter earnings call to draw a line between the company it has been and the company it wants investors to see next. Revenue was $10.6 billion, and non-GAAP EPS was $2.65, slightly ahead of the Street’s revenue expectations and comfortably ahead on EPS. QCT revenue was $9.1 billion, with licensing at $1.4 billion. The headline, however, was diversification.

First, automotive. Qualcomm reported QCT automotive revenue of $1.3 billion, up 38% year over year, driven by digital cockpit and ADAS programs moving to its fourth-generation Snapdragon Digital Chassis platforms. The company said it had exceeded $5 billion in annualized automotive revenue for the first time and expects to exit fiscal 2026 at a run rate above $6 billion.

Handsets remain the core business, but they are no longer the only proof point investors care about. Qualcomm is now selling a broader compute story that starts in phones, moves through PCs and cars, and ends in data centers, robots, factories, and sovereign AI infrastructure.

The new element is the data center custom ASIC engagement. CEO Cristiano Amon said Qualcomm is entering the data center with “a leading hyperscaler custom silicon engagement” on track for initial shipments later this calendar year. CFO Akash Palkhiwala repeated the point in prepared remarks, saying Qualcomm now expects initial shipments for that engagement later in calendar 2026.

Amon did not name the customer or give product details, but he said enough to outline the engagement's scope. Asked whether the engagement was a CPU, accelerator, or networking chip, he said Qualcomm had been building the necessary assets: CPU, accelerator, memory architecture, and custom ASIC capability. He then linked the move directly to Alphawave, saying Qualcomm had added “a lot of capabilities for custom ASIC with the acquisition of Alphawave and connectivity.”

Qualcomm is not presenting this as a one-off chip sale. Amon described the customer as a large hyperscaler and said Qualcomm is thinking about it as a “multi-generation engagement.” Later, when asked whether Qualcomm was shifting toward ASICs rather than merchant data center chips, he said the answer was “all of the above,” adding that Qualcomm expects to play in merchant and custom silicon, with different configurations of its IP blocks for different customers.

The cloud AI story began before this quarter

This is not Qualcomm’s first move into cloud inference. We covered the October 2025 launch of Qualcomm’s AI200 and AI250 as the company’s entry into cloud-based AI inference, detailing these Arm-based inference engines with DSP-based NPU technology and near-memory computing. The AI200 and AI250 were announced on October 27, 2025, with availability planned for 2026 and 2027.

Qualcomm’s own description of AI200 is centered on inference economics. AI200 is a rack-level AI inference solution using Hexagon NPU technology, direct liquid cooling, PCIe for scale-up, Ethernet for scale-out, confidential computing, and 768 GB of LPDDR memory per card. AI250 adds a near-memory-compute architecture and is positioned for much higher effective memory bandwidth and lower power.

The hyperscaler custom ASIC is likely built on capabilities Qualcomm has already been assembling in public. AI200 and AI250 gave Qualcomm a rack-scale inference platform. Alphawave gave it high-speed connectivity, chiplet, and custom silicon capabilities. Oryon gives it a high-performance CPU story. Hexagon gives it an accelerator lineage. None of that guarantees success in hyperscaler ASICs, but it makes the announcement more credible than it would have been two years ago.

Qualcomm is also trying to frame inference as the part of AI infrastructure where its heritage matters. Training has been GPU-led. Inference at scale is more sensitive to power, memory bandwidth, latency, utilization, and cost per token. That makes it a more natural pitch for a company that has spent decades optimizing compute under power and thermal constraints. Whether that experience transfers cleanly to hyperscale data centers, where the integration problem extends far beyond the chip, is the real test.

The ASIC market is getting crowded

Qualcomm is entering custom silicon at a time when the hyperscaler ASIC market is both attractive and increasingly difficult. Nvidia remains dominant in AI infrastructure, but large cloud companies want lower cost, tighter workload optimization, more supply assurance, and more control over their roadmaps. That has driven Google’s TPUs, AWS Trainium, Microsoft Maia, Meta MTIA, and a wave of co-designed chips built with merchant ASIC partners.

To understand the pressure on the market, look at the competitive landscape around Nvidia GPUs, Google TPUs, and AWS Trainium. GPUs are still the default AI silicon, but the biggest buyers are now building or backing alternatives, too.

Broadcom is the company Qualcomm must be measured against in custom AI ASICs. In March, Broadcom predicted that its AI chip revenue would surpass $100 billion by 2027, helped by custom-chip demand from large technology companies. Broadcom has become the back-end and connectivity-heavy partner that many hyperscalers trust to turn AI accelerator concepts into manufacturable silicon and deployable systems.

Arm is also moving from IP supplier toward silicon. In March 2026, Arm announced the AGI CPU, its first Arm-designed data center CPU for AI infrastructure, expanding beyond IP and compute subsystems into production silicon. Reuters reported that Meta is the lead partner, with other customers including OpenAI, Cloudflare, SAP, and SK Telecom, and that Arm expects the product to become a multibillion-dollar annual business.

Qualcomm’s opportunity is real, but the competition is strong, too. Broadcom has deep ASIC credibility and major AI infrastructure relationships. Marvell remains active in custom silicon. Google and Amazon have internal chip programs. Microsoft and Meta are pushing their own silicon efforts. Arm is now willing to sell chips, not just designs. Nvidia is not standing still, and its software ecosystem still matters.

What do we think?

This was a strong quarter for Qualcomm, but the custom ASIC announcement should not distract from what actually carried the results. Automotive did the heavy lifting. A 38% year-over-year increase to $1.3 billion is not a footnote—it is the clearest evidence that Qualcomm can build durable businesses beyond phones. The company’s target of exiting fiscal 2026 at an automotive run rate above $6 billion gives the market something tangible to measure.

The handset business is still huge and still vulnerable to cycles it cannot fully control. This quarter’s commentary around memory pressure and cautious China handset builds made that clear. Qualcomm has better visibility than most through its licensing business, but visibility is not immunity. The diversification story matters because the core market is mature and noisy.

The hyperscaler ASIC engagement is notable because it suggests Qualcomm is no longer just talking about data center adjacency. It has a customer, a product, and a shipment window. The technical base is credible: Oryon, Hexagon, Alphawave connectivity, and the memory architecture developed for AI200 and AI250 are pieces that can plausibly be assembled into custom silicon for a cloud customer.

Hyperscaler ASIC programs are long, expensive, and unforgiving. They require yield, packaging, firmware, compilers, board design, rack integration, supply chain execution, and a customer willing to commit across generations. Amon’s “multi-generation” language is encouraging, but it raises the stakes as much as it signals confidence. One chip does not make a successful platform.

Qualcomm knows power-efficient heterogeneous compute better than almost anyone. The data center ASIC market, however, already has incumbents with deep customer entanglement. Broadcom is no longer merely a supplier; it is becoming an infrastructure co-architect to the hyperscalers. Arm’s move into production silicon adds another complication, particularly because Qualcomm’s own CPU story sits on an Arm architecture license.

Automotive revenue is proving the diversification thesis. IoT is contributing growth. AI inference, backed by the AI200/AI250 platform, gives the company a credible wedge into hyperscaler infrastructure. Alphawave fills a gap Qualcomm could not easily have closed on its own. But Qualcomm is now walking into a knife fight with Broadcom, Arm, Nvidia, cloud in-house silicon teams, and every other company that has discovered the magic words “custom AI accelerator.”

Qualcomm has earned the right to be taken seriously in this market. It has not yet earned the right to be assumed successful. It now needs to go beyond narrative and show architecture, customer logic, software maturity, and a credible path from first hyperscaler shipment to repeatable business.