Arcturus aims to enhance accessibility and versatility within volumetric technology by incorporating the Mixed Reality Capture Studios (MRCS) tools they’ve taken over from Microsoft. We explore several areas of development for capture, including improving image quality, capturing more with fewer sensors, and making surfaces look more realistic and responsive to lighting and view changes. In the next few years, expect a range of capture solutions from spatial cameras on phones to multi-device swarm capture scenarios for large locations. It is uncertain how long it will take for single-camera capture to compete with traditional capture, but next-generation single-sensor solutions are expected to make use of temporal data and moving sensors to improve quality. The value and utility of performances authentically captured from a wide range of views will be different from those with significant hallucination (plausible, but not fully captured), creating a distinction in the commercial market.

What do we think? Arcturus is even more of a player now. The company has taken over MRCS from Microsoft, but that’s not the limit of its interest. The company gives useful insight into the technical challenges that remain to be solved and the potential of single-camera capture. We will be speaking to them again as they make progress.

We spoke to Arcturus’ CPO and CTO to get their insights.

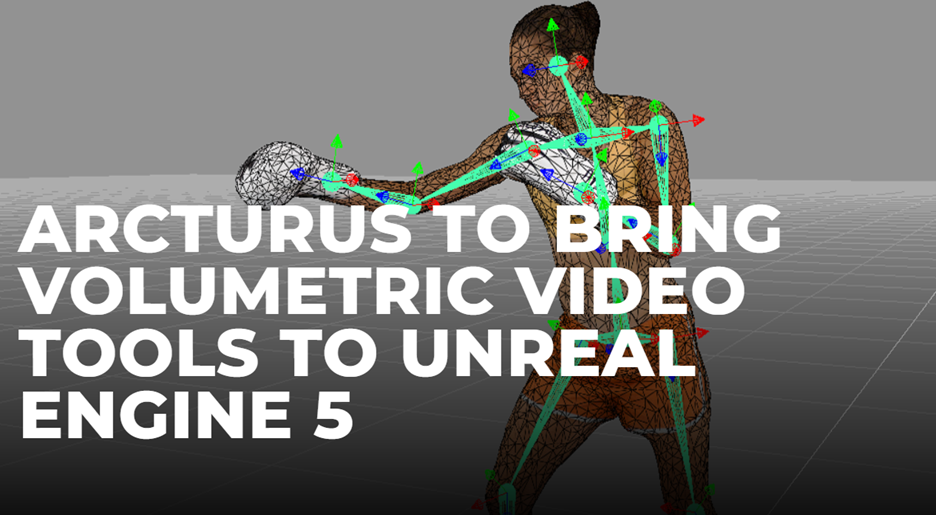

We recently covered how Arcturus, a company specializing in volumetric video technology, has taken over Microsoft’s Mixed Reality Capture Studios (MRCS) solution. The ‘meat’ of the deal was the transfer of Microsoft’s mixed reality capture stage technology to Arcturus, enabling the company to offer an all-encompassing solution aimed at enhancing accessibility and versatility within volumetric technology. MRCS partners are now working directly with Arcturus.

At the time, Kamal Mistry, CEO of Arcturus, told us, “Volumetric video is growing rapidly, it has begun to change the way people look at virtual production, mixed reality, music, and sports, and really any format that can benefit from 3D free-viewpoint video. By adding the MRCS tools, we can accelerate the work we began more than a decade ago, and evolve the pipeline to offer new solutions, designed to help to bring volumetric video to everyone.”

We were intrigued and spoke to Arcturus’ Chief Product Officer Steve Sullivan and CTO Devin Horsman to find out more.

JPR: What do you think is the longer-term direction of development for capture?

Devin Horsman: We are interested in how close we can get the cameras before the image begins to break down. We also want to get higher quality from fewer sensors. Ideally, we will be able to capture more using less hardware and fill the gaps through prior experience, i.e., machine learning. We are working to make surfaces look even more realistic and more responsive to lighting or view changes, which involves estimating the material properties of surfaces. This also applies to areas like glasses, hair, fine clothing, and prop details, all of which can be challenging. Other areas we are actively developing solutions for include altering or blending performances while staying true to the performer.

JPR: With AI and the ability to create avatars, etc., where do you think we will be in three-to-five years?

Steve Sullivan: In three to five years, we expect a spectrum of capture solutions satisfying a wide range of scenarios. We have already seen fast-growing adoption of spatial cameras on phones, and we expect that will quickly expand to webcams, laptop cams, camcorders, professional cameras, etc. For large locations, we believe phones with multi-sensor capabilities will permit multi-device swarm capture scenarios.

JPR: What will be the most important areas for technical development?

Devin: We believe five of the most important areas for technical development are (in no particular order):

- Turnkey capture from a wide range of devices

- Easy access to processing the image, no matter how it was captured

- Visual quality improvements, both in resolution and richness

- Ability to alter or mix performances while staying true to the performer’s motion and style

- Leveraging AI on multiple fronts, for example:

  - Applying image-based machine learning (ML) to inpainting and super-resolution

  - Significantly improving compression and topological stabilization with ML-driven deformation models

  - Generating more plausible blends and secondary motion for edited clips

  - Improving likenesses for modified volumetric data (e.g., in dubbing, SFX, stunts) in the final render, with per-actor deepfake-style appearance models applied after edits to regenerate realistic surface appearances

Steve: Single-camera capture technology, like Volograms, is currently supported by HoloSuite, and we’ve already completed several ad agency projects in collaboration with them. While single-camera capture is possible and useful in certain use cases, the highest-quality captures record performances with many cameras, in some cases well over 100.

JPR: How long before single-camera capture can compete with traditional capture?

Devin: We can’t say for certain, but from a technical viewpoint we believe that next-generation single-sensor capture solutions will make use of temporal data and moving sensors. They will work to decompose the motion of surfaces in a scene based on the motion of the camera. Surfaces seen earlier will be remembered when the camera is seeing another angle of the subject.

Steve: It’s likely that single-camera volumetric solutions will be adopted soon due to price and access, well before their quality approaches current commercial offerings. Adoption in scenarios where a full 360-degree view isn’t critical will also grow as consumers find value in them and become accustomed to these kinds of experiences. A solid 180-degree view plus a plausible version of the other 180 degrees, in effect a hallucination, would suffice. Over time, that 180-degree hallucination will improve, but there will always be a distinction between a performance that was authentically captured from a wide range of views and one that relies on significant 180-degree hallucination. We believe this will be a distinction in the commercial market, with “authentically captured” performances having different value and utility than those that contain significant hallucination, however plausible the result is for that performance.